Earthquake for Big Tech: Juries Hit Meta and YouTube with Multi-Million Dollar Verdicts Over Youth Social Media Addiction

March 26, 2026 /Mpelembe Media/ — A landmark legal shift is currently unfolding as social media giants face unprecedented liability for the mental health impacts of their platforms on minors.

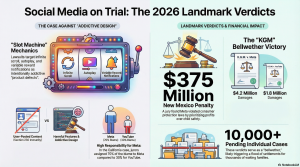

Landmark Jury Verdicts

In a first-of-its-kind bellwether trial in Los Angeles, a jury ordered Meta and Google (YouTube) to pay $3 million in compensatory damages and recommended an additional $3 million in punitive damages to a 20-year-old woman, known in court as K.G.M. or Kaley. The jury found that both companies acted negligently and with malice, oppression, or fraud by designing platforms that addicted the plaintiff as a child, exacerbating her depression, anxiety, and body dysmorphia. Meta was assigned 70% of the responsibility for the harm, while YouTube bore 30%. TikTok and Snap, initially named as co-defendants, settled the claims against them just before the trial began.

This decision came on the heels of a separate verdict by a New Mexico jury, which fined Meta $375 million for violating state consumer protection laws by misleading the public and failing to safeguard children from sexual exploitation and mental health harms.

The “Addictive by Design” Legal Strategy

Plaintiffs have bypassed Section 230 of the Communications Decency Act, a law that traditionally shields tech companies from liability for user-generated content, by shifting the focus to the inherent design and architecture of the platforms. Their lawyers successfully argued that features like infinite scroll, autoplay, ephemeral content, beauty filters, and intermittent push notifications are not neutral tools, but rather defective products engineered to trigger dopamine responses and foster compulsive, slot-machine-like use.

Damning Internal Evidence

These lawsuits have drawn frequent comparisons to the historical litigation against Big Tobacco. During the trial, juries were shown internal documents revealing that the companies were aware of the psychological harms their platforms caused but prioritized growth and engagement over user safety. For example, an internal YouTube strategy memo explicitly stated, “If we want to win big with teens, we must bring them in as tweens,” while an Instagram employee referred to the company’s staff as “basically pushers” who were “causing reward deficit disorder.” Meta CEO Mark Zuckerberg testified at the trial, though jurors reportedly felt his testimony was evasive and did not “sit well” with them.

A Massive Nationwide Legal Battle

These verdicts represent just the beginning of a much larger wave of litigation. Over 2,000 personal injury cases and 800 school district lawsuits have been consolidated into a federal multidistrict litigation (MDL No. 3047) in Northern California. School districts are suing to recover the costs of addressing the student mental health crisis, behavioral issues, and property damage linked to social media addiction. Concurrently, over 40 state attorneys general are actively pursuing legal action against the platforms.

Global and Legislative Ripple Effects

The mounting legal pressure coincides with aggressive regulatory efforts globally. Australia recently enacted a nationwide ban on social media for children under 16. Meanwhile, several U.S. states, including New York and California, have passed landmark laws aiming to restrict “addictive feeds” and mandate mental health warning labels for minors.

Social Media’s Big Tobacco Moment: How the Infinite Scroll Became a Predatory Engine

1. The Hook: The End of Digital Invincibility

For over a decade, the “infinite scroll” was marketed as the ultimate digital convenience: a seamless, friction-free way to stay connected. But in 2026, the tech industry’s shield of invincibility finally shattered. In the span of a single week, back-to-back landmark verdicts in New Mexico and Los Angeles recast social media from a harmless pastime into a precision-engineered liability, and the infinite scroll from a convenience into a predatory engine stripped of its immunity. These trials represent a historic shift: the moment the “Wild West” of the attention economy was forced to face a jury of its peers.

2. The “Digital Opioid” Comparison is Now a Legal Reality

The courtroom victory for plaintiffs wasn’t built on a critique of “bad content,” but on a profound neurological indictment. During the “K.G.M.” trial in Los Angeles and in the expansive school district litigation, attorneys successfully framed social media as a “digital opioid.” This wasn’t just rhetorical flourish; it was a clinical argument supported by experts like Stanford’s Dr. Anna Lembke, who testified that platform reward mechanisms exploit the same dopamine pathways as chemical substance addiction.

By comparing social media to heroin, plaintiffs shifted the definition of “negligence” away from a failure of parenting and toward a fundamental failure of product safety. This shift was led by powerhouse litigators like Jayne Conroy, who famously held pharmaceutical companies responsible for the opioid epidemic. Conroy’s pivot from Big Pharma to Big Tech signaled that the legal world now views dopamine-driven engagement as a public health crisis on par with narcotics.

“The medical science is not really all that different, surprisingly, from an opioid or a heroin addiction. We are all talking about the dopamine reaction… These companies knew about the risks, they have disregarded the risks, they doubled down to get profits from advertisers over the safety of kids.” (Jayne Conroy, Plaintiff Attorney)

3. It’s Not the Content, It’s the Craft: Bypassing Section 230

Historically, Big Tech has hidden behind Section 230 of the 1996 Communications Decency Act, claiming they are merely “passive conduits” for what users post. The 2026 verdicts effectively neutered this shield by focusing on Design Defects rather than user-generated content. Like the tobacco litigation before it, the product’s delivery mechanism (the “cigarette” itself) was put on trial, not the “smoke” (the content).

Plaintiffs argued that the platforms are defective by design, specifically identifying features engineered to bypass human willpower:

Infinite Scroll: Eliminates natural stopping cues to exploit adolescent impulsivity.

Autoplay: Forces a continuous loop of consumption without active user choice.

Variable Reward Notifications: Mimics slot machine mechanics to trigger compulsive, high-frequency checking.

This strategy shifts the burden of responsibility from the user directly to the engineer. As Sarah Kreps of Cornell University’s Tech Policy Institute noted, the significance of these cases lies in their role as a “bellwether” for thousands of pending suits, proving that product liability is the new frontline in tech regulation.

“The reason why this case is consequential is not the individual case, but the way that it’s a bellwether test case that might guide the resolution of other lawsuits… using a novel product liability strategy.” (Sarah Kreps, Cornell University)

4. The “Smoking Gun” Internal Records

The New Mexico jury found Meta’s “profit over safety” narrative to be “unconscionable” after internal records revealed a chilling level of corporate awareness. Juries were shown a 2016 internal email in which Meta staff argued against telling parents what their children were viewing because doing so would “ruin the product from the start.”

The evidence went deeper, revealing that Meta was aware of “sextortion” schemes targeting minors on Instagram as early as 2019. Most damning was the revelation that Meta’s growth teams prioritized engagement metrics over a safety team suggestion to make teen accounts private by default, a move internal data estimated would have prevented 5.4 million unwanted interactions daily. Instead, they chose the profit.

“This was known… This was not an accident. This was not a coincidence. This was a foreseeable consequence of the deliberate design decisions that Meta made.” (Matthew P. Bergman, Social Media Victims Law Center)

5. The Scale of the Reckoning: Beyond Individual Plaintiffs

The 2026 verdicts are the opening salvo in a massive, institutionalized litigation wave. The sheer breadth of the legal onslaught indicates that this is an inflection point for the industry:

41+ State Attorneys General: A bipartisan coalition has moved to hold Meta and others accountable for consumer protection violations.

800 School Districts: These districts are seeking to recover the massive economic costs of the youth mental health crisis—money spent on additional counseling, specialized safety staff, and mental health interventions necessitated by platform-driven addiction.

The Bellwether Effect: These first successful cases provide a roadmap for thousands of individual and class-action lawsuits currently moving through the system.

6. “We Wanted Them to Feel It”: The Symbolic Power of the Penalty

While Meta reported a staggering $201 billion in revenue in 2025, the 2026 penalties were designed to send a message of moral rebuke. New Mexico levied a $375 million penalty, while the Los Angeles jury in the K.G.M. case awarded $6 million, half of which was specifically in punitive damages for “malice, oppression, or fraud.”

Crucially, the Los Angeles jury’s breakdown of liability highlights a nuanced view of the defendants: Meta bore 70% of the responsibility ($4.2 million), while YouTube was assigned the remaining 30% ($1.8 million). This suggests that juries increasingly view Meta’s Instagram as the more potent “addiction engine.” The inclusion of punitive damages is the real death knell for the “we didn’t know” corporate defense; it signifies that the jury found the companies’ conduct was not just negligent, but intentionally harmful.

“We landed on the $6 million in damages… we still wanted the companies to understand they felt their practices were not acceptable. We wanted them to feel it.” (Anonymous Juror, Los Angeles Case)
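For readers who want to check the numbers, the apportionment arithmetic can be reproduced in a few lines of Python. This is only an illustrative sketch: the function and variable names are hypothetical, and it assumes, as the figures quoted in this article do, that the 70/30 split applies to the full $6 million award. The last lines compare the New Mexico penalty with the 2025 revenue figure reported above.

```python
def apportion(total_usd, shares_pct):
    """Split a damages total across defendants by percentage share.

    Hypothetical helper for illustration; amounts are whole dollars.
    """
    if sum(shares_pct.values()) != 100:
        raise ValueError("shares must sum to 100 percent")
    return {name: total_usd * pct // 100 for name, pct in shares_pct.items()}


# Figures as reported in this article.
TOTAL_AWARD_USD = 6_000_000            # $3M compensatory + $3M punitive
NM_PENALTY_USD = 375_000_000           # New Mexico penalty against Meta
META_REVENUE_2025_USD = 201_000_000_000

# Assumption: the 70/30 responsibility split is applied to the whole award.
split = apportion(TOTAL_AWARD_USD, {"Meta": 70, "YouTube": 30})
print(split)  # {'Meta': 4200000, 'YouTube': 1800000}

# The New Mexico penalty expressed as a percentage of one year of revenue.
penalty_pct_of_revenue = NM_PENALTY_USD / META_REVENUE_2025_USD * 100
print(f"{penalty_pct_of_revenue:.2f}% of 2025 revenue")  # 0.19% of 2025 revenue
```

On these figures, the New Mexico penalty comes to roughly a fifth of one percent of Meta’s reported annual revenue, which is why the awards read as symbolic rather than financially crippling.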

7. Conclusion: A New Social Contract for the Digital Age

The verdicts of 2026 signal the twilight of the “Wild West” era for Big Tech. While U.S. federal regulation continues to move at a glacial pace, the courts are effectively drafting a new social contract. By treating digital platforms as physical products subject to strict safety standards, the legal system is finally catching up to the psychological realities of the 21st century.

The era of digital invincibility is over. We are now left with a fundamental question: As we strip away the immunity of these predatory engines, what will the new standards for our children’s digital well-being look like, and will the platforms finally choose human safety over a 5.4-million-interaction “hit”?