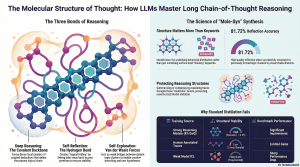

Feb 22, 2026 /Mpelembe media/ — This research explores the structural stability of Long Chain-of-Thought (CoT) reasoning in large language models by using a chemical bond analogy. The authors identify four primary reasoning behaviors—normal operation, deep reasoning, self-reflection, and exploration—which act as “bonds” that stabilize the logical progression of a model. By applying mathematical modeling and Gibbs–Boltzmann energy distributions, the text demonstrates how self-correction and hypothesis branching prevent “hallucination drift” and ensure self-consistency. Comparative testing across various models, such as LLaMA and Qwen, reveals that high structural correlation between reasoning chains is necessary for maintaining performance. The study also utilizes Sparse Auto-Encoders and t-SNE visualizations to map the geometric compactness of these thought processes in embedding space. Ultimately, the findings suggest that semantic compatibility and rigid cognitive architectures determine a model’s ability to solve complex mathematical and scientific problems.

The Cold-Start Mystery of Long-Form Logic

We have entered a new “reasoning era” in artificial intelligence, defined by models like DeepSeek-R1 and QwQ that navigate complex, multi-step problems through extended Chains of Thought (Long CoT). However, a persistent “cold-start” mystery remains: why do standard distillation methods and human-written solutions fail to teach smaller models how to “think” for long durations?

The data reveals a stark divergence between human logic and machine reasoning. While humans generate step-by-step solutions intuitively, human-annotated traces are often ineffective at inducing the necessary “folding” behaviors in LLMs. Empirical analysis confirms that standard supervised fine-tuning (SFT) using randomly sampled Long CoT examples results in structural instability: models lose coherence or fail to transfer patterns. The breakthrough discovery is that effective reasoning is not a linear sequence of steps; it is a folded molecular structure. To train a model in Long CoT, one must replicate the underlying “chemical” bonds that hold the logic together.

Takeaway 1: Reasoning is Held Together by Three Specific “Chemical Bonds”

Effective Long CoT reasoning is characterized by a stable distribution of three core behaviors. In the Transformer architecture, these bonds are not merely metaphors; they correspond to Gibbs-Boltzmann distributions within the attention mechanism. The attention energy ( $E$ ) of these bonds determines the stability of the reasoning chain.

Deep Reasoning as Covalent Bonds: These form the logical “backbone” of the thought process, defining the primary chain. They possess the highest effective bond energy ( $D_d$ ), encoding strong logical dependencies where Step A rigorously justifies Step B.

Self-Reflection as Hydrogen Bonds: These “fold” the reasoning process back on itself. Similar to how intra-chain hydrogen bonds stabilize proteins, reflection creates long-range links where later steps test, revise, or reinforce earlier premises. These bonds have intermediate energy levels that turn linear sequences into self-consistent, folded structures.

Self-Exploration as Van der Waals Forces: These are the weakest effective bonds ( $D_e$ ), acting as transient bridges. They allow the model to probe new concepts and low-commitment associations in the semantic space before stronger logical constraints are enforced.

“Effective and learnable Long CoT trajectories feature stable molecular-like structures… which are formed by three interaction types: Deep-Reasoning (covalent-like), Self-Reflection (hydrogen-bond-like), and Self-Exploration (van der Waals-like).”
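The link between attention and Gibbs-Boltzmann distributions can be made concrete with a small sketch. It relies on a standard identity: a softmax over attention scores is a Boltzmann distribution whose energies are the negated scores (at temperature 1). The three scores below are hypothetical values chosen only for illustration; how they relate to the bond energies $D_d$ and $D_e$ is not specified here.

```python
import math

def softmax(scores):
    """Standard softmax, shifted by the max score for numerical stability."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def boltzmann(energies, temperature=1.0):
    """Gibbs-Boltzmann probabilities p_i proportional to exp(-E_i / T)."""
    m = min(energies)  # shifting all energies by a constant leaves p_i unchanged
    exps = [math.exp(-(e - m) / temperature) for e in energies]
    z = sum(exps)  # partition function (after the shift)
    return [e / z for e in exps]

# Hypothetical attention scores for three links (assumption, not from the study):
# a deep-reasoning link, a self-reflection link, and a self-exploration link.
scores = [4.0, 2.0, 0.5]
energies = [-s for s in scores]  # energy is the negated score

attn = softmax(scores)
gibbs = boltzmann(energies)

for name, a, g in zip(["deep", "reflection", "exploration"], attn, gibbs):
    print(f"{name:12s} softmax={a:.4f} boltzmann={g:.4f}")
```

Under this reading, a link with a higher score sits at lower energy and receives more attention weight, which matches the ordering of strong, intermediate, and weak bonds described above.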

Takeaway 2: Keywords are Red Herrings—Structure is Everything

A common misconception is that models learn to reason by imitating keywords like “Wait” or “Let’s think.” However, Sparse Auto-Encoder (SAE) analysis reveals that SFT actually carves out “dedicated latents” for discourse-control structures (such as “Alternative” or “But”).

Models internalize the behavioral distribution rather than surface-level lexical cues, leading to the concept of Semantic Isomers. These are reasoning chains that visit the same semantic regions but utilize different bond distributions. The data confirms that a “Semantic Isomer” is only effective if its underlying bond structure promotes fast entropy convergence. If the entropy does not converge, the reasoning “molecule” becomes unstable, regardless of the keywords used.

Takeaway 3: Why Human Logic and Weak Distillation Fail

Empirical analysis confirms a “sharp drop” in performance when models attempt to learn from human-annotated traces. The failure stems from a fundamental mismatch in reasoning dynamics. Humans reason with a “uniform forward information gain,” whereas strong models like R1 utilize metacognitive oscillation.

This oscillation involves alternating between High-entropy exploration (where the phase-space slope $m_t > 0.6$ ) and Low-entropy validation (where $m_t \approx 0$ ). Human reasoning lacks this machine-specific dynamic, maintaining a near-zero slope that fails to provide the “folding” signals models need.

| Feature | Human Reasoning Dynamics | R1 / Long-CoT Dynamics |
| --- | --- | --- |
| Information Gain | Uniform forward gain (81.3% of cases) | Accelerating informativeness (76.1% of cases) |
| Entropy Profile | Near-zero slope ( $m_t \approx 0$ ) | Metacognitive oscillation ( $m_t > 0.6$ cycles) |
| Stability Mechanism | Iterative self-monitoring | Self-reflective “folding” & reward maximization |
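The two entropy regimes described above can be illustrated with a minimal sketch that labels each reasoning step by its slope $m_t$ and counts how often a trace switches between regimes. The text does not say how $m_t$ is computed, so the function takes precomputed slopes as input. The 0.6 threshold comes from the text; the tolerance of 0.05 for "approximately zero", the "transition" label, and both example traces are assumptions made for illustration.

```python
def label_phases(slopes, explore_threshold=0.6, validate_tolerance=0.05):
    """Label each step by its slope m_t.

    explore_threshold follows the m_t > 0.6 figure in the text;
    validate_tolerance is an assumed cutoff for m_t being close to 0.
    """
    labels = []
    for m in slopes:
        if m > explore_threshold:
            labels.append("exploration")   # high-entropy exploration
        elif abs(m) <= validate_tolerance:
            labels.append("validation")    # low-entropy validation
        else:
            labels.append("transition")    # neither regime (assumed extra label)
    return labels

def count_oscillations(labels):
    """Count switches between exploration and validation, skipping transition steps."""
    core = [label for label in labels if label != "transition"]
    return sum(1 for a, b in zip(core, core[1:]) if a != b)

# Hypothetical slope traces (made-up numbers, not measurements from the study).
machine_like = [0.8, 0.7, 0.02, 0.0, 0.9, 0.01]
human_like = [0.01, 0.0, -0.02, 0.03, 0.0, 0.01]

print(label_phases(machine_like), count_oscillations(label_phases(machine_like)))
print(label_phases(human_like), count_oscillations(label_phases(human_like)))
```

On these toy inputs the machine-like trace alternates between the two regimes, while the human-like trace stays in the near-zero band throughout and never oscillates.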

Takeaway 4: The Danger of “Structural Chaos” in Model Mixing

When researchers attempt to fuse data from different stable reasoning models (e.g., mixing DeepSeek-R1 and OpenAI-OSS data), the result is a decline in performance due to the rigidity of the cognitive architecture.

The research identifies these models as “Allotropic Variants”: structurally stable but incompatible. Forcibly mixing them doesn’t just lower accuracy; it results in “structural chaos.” The model cannot converge on a single stable behavioral mode, leading to fragmented, low-utility outputs. Statistical similarity between models does not guarantee structural compatibility.

Takeaway 5: Mole-Syn—Synthesizing Intelligence from Scratch

To overcome the limitations of distillation, the Mole-Syn methodology introduces a way to generate effective Long-CoT structures without direct teacher data. Mole-Syn performs a “random walk on a transition probability graph” composed of the four core behaviors: Normal, Deep, Reflection, and Exploration.

By transferring the distribution-transfer-graph ( $\mathbf{P_C}$ ) and marginal distribution ( $\mathbf{\pi_C}$ ) from stronger models, Mole-Syn guides instruction-level LLMs to synthesize their own stable logic. This approach has boosted Reinforcement Learning (RL) stability and performance across six major benchmarks, including GSM8K, MATH-500, AMC 2023, and the AIME 2024/2025 invitational exams.
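The random walk over behaviors can be sketched as a small Markov chain sampler. This is an illustrative sketch rather than the Mole-Syn implementation: the transition probabilities in `P_C` and the values in `PI_C` are hypothetical placeholders, and using the marginal distribution to pick the first behavior is an assumption. In the described method these quantities would be estimated from a stronger model's traces.

```python
import random

BEHAVIORS = ["normal", "deep", "reflection", "exploration"]

# Hypothetical transition graph P_C: each row gives the probability of the next
# behavior given the current one. Every row sums to 1. Values are placeholders.
P_C = {
    "normal":      {"normal": 0.2, "deep": 0.5, "reflection": 0.1, "exploration": 0.2},
    "deep":        {"normal": 0.1, "deep": 0.5, "reflection": 0.3, "exploration": 0.1},
    "reflection":  {"normal": 0.1, "deep": 0.5, "reflection": 0.1, "exploration": 0.3},
    "exploration": {"normal": 0.2, "deep": 0.4, "reflection": 0.3, "exploration": 0.1},
}

# Hypothetical marginal distribution pi_C, used here to draw the first behavior
# (an assumption about how the walk is started).
PI_C = {"normal": 0.4, "deep": 0.3, "reflection": 0.1, "exploration": 0.2}

def sample_behavior_chain(length, transitions=P_C, initial=PI_C, seed=None):
    """Sample a sequence of behaviors by a random walk on the transition graph."""
    if length < 1:
        return []
    rng = random.Random(seed)  # local generator so results are reproducible per seed
    state = rng.choices(list(initial), weights=list(initial.values()))[0]
    chain = [state]
    for _ in range(length - 1):
        row = transitions[state]
        state = rng.choices(list(row), weights=list(row.values()))[0]
        chain.append(state)
    return chain

chain = sample_behavior_chain(8, seed=0)
print(" -> ".join(chain))
```

A sampled chain like this would serve as a behavioral skeleton that an instruction-tuned model is prompted to fill in step by step; that prompting stage is outside the scope of the sketch.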

Takeaway 6: The Secret Weapon of Private AI—Breaking the Bonds

Top-tier AI labs protect their proprietary models through a “defense-in-depth” strategy that involves “breaking” the molecular bonds of reasoning. By applying summarization and reasoning compression, labs can provide correct answers while stripping away the internal error-bounded transitions.

Data confirms that a 45% token reduction via summarization is the “breaking point” where the molecular structure of reasoning collapses. Without the full “hydrogen bonds” of reflection and “covalent bonds” of deep logic, an imitating model cannot reconstruct the internal logic, effectively preventing unauthorized behavioral cloning through distillation.
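As a quick arithmetic illustration of the 45% figure, the sketch below computes the fractional token reduction of a summarized trace and checks it against that threshold. The function names and the example token counts are hypothetical; only the 0.45 value comes from the text, and how tokens are counted is left to the caller.

```python
BREAKING_POINT = 0.45  # fraction of tokens removed, per the 45% figure in the text

def token_reduction(original_tokens, compressed_tokens):
    """Fraction of tokens removed by summarization or compression."""
    if original_tokens <= 0:
        raise ValueError("original_tokens must be positive")
    return (original_tokens - compressed_tokens) / original_tokens

def crosses_breaking_point(original_tokens, compressed_tokens, threshold=BREAKING_POINT):
    """True if the reduction reaches or exceeds the threshold."""
    return token_reduction(original_tokens, compressed_tokens) >= threshold

# Hypothetical example: a 2000-token reasoning trace summarized two ways.
print(token_reduction(2000, 1600), crosses_breaking_point(2000, 1600))
print(token_reduction(2000, 1000), crosses_breaking_point(2000, 1000))
```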

Toward a “Folding” Theory of Mind

The shift from viewing AI as a “text generator” to a “molecular constructor” of logic marks a significant evolution in our understanding of machine intelligence. The AI’s search for a solution is remarkably similar to a “protein’s descent along a folding funnel” toward a low-energy native state.

This “folding” theory suggests that reasoning is not a linear string of tokens, but a complex, folded topology of behaviors. The quest for AGI may well depend on our ability to discover more complex “bonding” behaviors, mapping the increasingly intricate folding of thought itself.