Maximizing AI Accuracy: Automating Workflows with the Vertex AI Prompt Optimizer

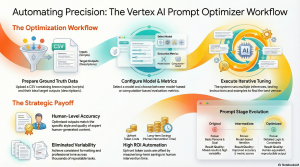

23 Feb. 2026 /Mpelembe Media/ — The Vertex AI Prompt Optimizer is a tool designed to refine AI instructions automatically using ground truth data. By comparing initial outputs against high-quality examples, the system iteratively adjusts system prompts to achieve greater accuracy and consistency. The author illustrates this process through a Firebase case study, where the tool was used to transform rough video scripts into professional YouTube descriptions. Although the optimization process requires an upfront investment in time and tokens, it significantly reduces the need for manual human intervention. Ultimately, the source highlights how data-driven optimization can replace trial-and-error prompting with a more reliable, automated workflow.

For most organizations, “prompt engineering” has devolved into a grueling, unscientific cycle of manual trial and error. Teams spend countless hours tweaking adjectives and adjusting temperature settings, only to get inconsistent outputs that drift from the brand voice or fail at basic logic. This “prompt engineering fatigue” is more than a frustration. It is a significant drain on technical resources, an “iteration tax” paid in human hours for mediocre results.

The Vertex AI Prompt Optimizer forces a fundamental shift in this paradigm. Instead of manual guesswork, it offers a rigorous, data-driven path to accurate, reproducible model outputs. Running Gemini 2.5 Flash within a Colab Enterprise notebook, the optimizer turns prompt creation from a subjective art into a repeatable engineering discipline.

Moving from Intuition to “Ground Truth” Data

The most significant evolution offered by the Vertex AI Prompt Optimizer is the transition from “vibe-based” prompting to dataset-driven development. The process centers on a “target” column: a collection of human-generated excellence that serves as the ground truth. Given a series of known input and output pairs, the optimizer can programmatically check whether a prompt revision actually improves results or merely changes them.

From an ethical and strategic perspective, this shift redefines the human role. As an ethicist, I must stress that it places a new burden of responsibility on the human lead: the “human-generated excellence” used as the benchmark must be rigorously vetted for bias and accuracy. Because the optimizer scales these values automatically, the quality of your initial data curation determines the integrity of your entire automated workflow.
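Curation is also where lightweight tooling can help. The following is a minimal, hypothetical vetting pass over a ground-truth file. It assumes JSONL records with an `input` field (an assumed name, not from the source) alongside the `target` field the optimizer compares against, and it flags only mechanical defects such as missing, empty, or duplicated targets. Reviewing those targets for bias and factual accuracy remains a human job.

```python
import json
from collections import Counter

# Assumed record layout: one JSON object per line with the raw script in
# "input" (hypothetical name) and the human-written description in "target".
REQUIRED_FIELDS = ("input", "target")


def load_ground_truth(jsonl_text):
    """Parse JSONL text into a list of dicts, skipping blank lines."""
    return [json.loads(line) for line in jsonl_text.splitlines() if line.strip()]


def vet_ground_truth(records):
    """Return human-readable problems found in the dataset.

    Only mechanical checks are performed (missing/empty fields, duplicated
    targets); bias and accuracy review still needs a person.
    """
    problems = []
    for i, rec in enumerate(records):
        for field in REQUIRED_FIELDS:
            value = rec.get(field)
            if not isinstance(value, str) or not value.strip():
                problems.append(f"row {i}: missing or empty '{field}'")

    # Count non-empty targets so identical "excellent" answers are visible.
    targets = Counter(
        rec["target"].strip()
        for rec in records
        if isinstance(rec.get("target"), str) and rec["target"].strip()
    )
    for text, count in targets.items():
        if count > 1:
            problems.append(f"target duplicated {count} times: {text[:40]!r}")
    return problems
```

Duplicated targets are worth flagging because a benchmark that rewards one answer for several different inputs can quietly teach the optimizer to produce generic output.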

The Logic of Synthetic Thinking (150 Words Per Minute)

A human engineer might instruct an AI to “be accurate with timestamps,” but the Vertex AI Prompt Optimizer can derive the specific calculation required to achieve that goal. In a recent experiment involving YouTube scripts, the optimizer didn’t just suggest a better tone. It derived a logical framework for estimating duration from word count, a level of granular instruction a human might never define so strictly.

In the “Latest prompt with output” section of the optimization results, the tool generated the following instruction set:

“Assume an average speaking pace of 150 words per minute to convert the total word count into the estimated total video duration. Then, for each major section, calculate its word count. Determine each timestamp by its cumulative word count relative to the start of the video, converted into minutes and seconds using the same 150 words per minute pace.”

This “automated reasoning” shows how the optimizer turns a vague request into a precise, checkable calculation, so the generated outputs stay grounded in explicit logic rather than guesswork.
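The derived rule is plain arithmetic: at 150 words per minute each word accounts for 0.4 seconds, so a section’s timestamp is the word count of everything before it divided by 150, expressed in minutes. A minimal Python sketch of that rule follows. It assumes sections arrive as (title, text) pairs, that words are counted by splitting on whitespace, and that each timestamp marks the start of a section; these are illustrative choices, not details taken from the optimizer’s output.

```python
WORDS_PER_MINUTE = 150  # speaking pace stated in the optimized prompt


def format_timestamp(seconds):
    """Format a non-negative duration in seconds as M:SS, rounded to the nearest second."""
    total = int(round(seconds))
    minutes, secs = divmod(total, 60)
    return f"{minutes}:{secs:02d}"


def chapter_timestamps(sections, wpm=WORDS_PER_MINUTE):
    """Return ([(title, start_timestamp), ...], estimated_total_duration).

    Each section starts at the cumulative word count of the sections
    before it, converted to time at `wpm` words per minute.
    """
    timestamps = []
    words_so_far = 0
    for title, text in sections:
        start_seconds = words_so_far / wpm * 60
        timestamps.append((title, format_timestamp(start_seconds)))
        words_so_far += len(text.split())  # whitespace word count (assumption)
    total_duration = format_timestamp(words_so_far / wpm * 60)
    return timestamps, total_duration
```

For example, sections of 75, 300, and 150 words start at 0:00, 0:30, and 2:30, for an estimated total of 3:30.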

The “Instructions and Demo” Optimization Mode

The optimizer’s methodology uses an “instructions and demo” mode. In this mode, Gemini 2.5 Flash optimizes the system instructions and then systematically tests whether few-shot prompting (including worked examples within the prompt) actually improves the performance metrics.

Much of the tool’s strategic value lies in how it balances two distinct types of evaluation:

Model-based metrics: These use Gemini to assess subjective qualities like brand voice, tone, and helpfulness.

Computation-based metrics: These use ground truth data to calculate the success of the optimization mathematically, which is vital for objective accuracy such as the timestamp calculations described earlier.

By selecting the right metric for the task, developers ensure the optimizer isn’t just “dreaming up” a better prompt, but is held to a rigorous, measurable standard of success.
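To make these two ideas concrete, here is a simplified sketch of comparing an instruction-only prompt against an instruction-plus-demos prompt with a computation-based metric. It swaps in a basic token-overlap F1 score as the metric (Vertex AI’s own computation-based metrics, such as exact match or ROUGE, are computed differently), and `generate` stands for a hypothetical callable that sends a prompt to a model and returns text. It covers only the “do the demos help?” comparison; the real optimizer also rewrites the instructions themselves.

```python
from collections import Counter
from typing import Callable, Sequence, Tuple

Pair = Tuple[str, str]  # (input, target) for evaluation, (input, output) for demos


def token_f1(prediction: str, reference: str) -> float:
    """Token-overlap F1 between a model output and its ground-truth target."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    if not pred_tokens or not ref_tokens:
        return float(pred_tokens == ref_tokens)  # both empty counts as a match
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


def build_prompt(instruction: str, demos: Sequence[Pair], query: str) -> str:
    """Assemble the instruction, optional few-shot demos, and the new input."""
    parts = [instruction]
    for demo_input, demo_output in demos:
        parts.append(f"Input:\n{demo_input}\nOutput:\n{demo_output}")
    parts.append(f"Input:\n{query}\nOutput:")
    return "\n\n".join(parts)


def score_variant(generate: Callable[[str], str], instruction: str,
                  demos: Sequence[Pair], eval_set: Sequence[Pair]) -> float:
    """Mean token F1 of one prompt variant over (input, target) pairs."""
    if not eval_set:
        raise ValueError("eval_set must contain at least one (input, target) pair")
    scores = [token_f1(generate(build_prompt(instruction, demos, query)), target)
              for query, target in eval_set]
    return sum(scores) / len(scores)


def pick_best(generate: Callable[[str], str], instruction: str,
              demo_pool: Sequence[Pair], eval_set: Sequence[Pair]):
    """Score instruction-only vs. instruction-plus-demos and return the winner.

    On a tie, max() keeps the first entry, i.e. the shorter, cheaper prompt.
    """
    variants = {
        "instruction_only": [],
        "instruction_and_demos": list(demo_pool),
    }
    scored = {name: score_variant(generate, instruction, demos, eval_set)
              for name, demos in variants.items()}
    best = max(scored, key=scored.get)
    return best, scored
```

Because `generate` is injected, the selection logic can be exercised with a stub function before any tokens are spent on a real model.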

Quality Comes at an Upfront Premium

High-accuracy automation requires a strategic upfront investment. In the case of optimizing YouTube descriptions for a recurring release series, running the optimizer against a dataset of 45 input prompts took roughly four hours and consumed millions of tokens, at a total cost of approximately “a couple hundred dollars.”

While roughly $200 for a single prompt might seem high, the return comes from eliminating the “iteration tax.” The original manual prompt produced highly variable output, so a marketing team member had to review and edit every result. By spending the resources upfront, the organization gets consistent, high-quality output that scales across months of content with minimal human intervention. As the source evaluation concludes:

“The trade off on the extra time allocated for creating the prompts was worth the tradeoff in token consumption costs.”
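Whether that premium pays off comes down to a simple breakeven calculation: divide the upfront cost by the human review cost saved on each output. The function below sketches that arithmetic. The per-output review costs are inputs you would estimate for your own team; they are not figures from the case study.

```python
import math
from typing import Optional


def breakeven_outputs(upfront_cost: float,
                      review_cost_before: float,
                      review_cost_after: float) -> Optional[int]:
    """Number of outputs after which an upfront optimization pays for itself.

    review_cost_before / review_cost_after: estimated human review cost per
    output with the manual prompt and with the optimized prompt (same currency).
    Returns None if the optimized prompt saves nothing per output.
    """
    saving_per_output = review_cost_before - review_cost_after
    if saving_per_output <= 0:
        return None
    # Round up: a partial output does not recover the remaining cost.
    return math.ceil(upfront_cost / saving_per_output)
```

With the roughly $200 upfront cost from the case study and purely hypothetical review costs of $25 per output before optimization and $5 after, the optimization would pay for itself after 10 outputs (200 / 20).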

Eliminating “Conversational Filler” for Professionalism

Human-written prompts often inadvertently encourage the AI to include “pleasantries” that clutter professional workflows. The Vertex AI Prompt Optimizer explicitly identifies and removes these AI “filler” phrases.

The optimized instructions tell the model to drop conversational introductory or concluding phrases, such as “Here’s a compelling YouTube description.” They go a step further in technical cleanliness by instructing the model to remove extraneous separators like “---”, which models often use to organize their thoughts but which serve no purpose in a final, scannable YouTube description. The result is a “clean” output that is immediately ready for publication in a professional technology organization, where directness and efficiency are the primary requirements.
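The optimizer achieves this through its instructions, but some teams may also want a deterministic safety net after generation. The following is a separate technique, not part of the optimizer: a regex filter that drops lines matching a hypothetical list of filler patterns, plus separator lines made of three or more hyphens, asterisks, or underscores, or of dash characters alone. The patterns are assumptions to be tuned against your own outputs.

```python
import re

# Hypothetical filler patterns; tune these against your own model outputs.
FILLER_PATTERNS = [
    # Openers such as "Here's a compelling YouTube description."
    re.compile(r"^here(?:'|\u2019)s (?:a|an|your) [\w\s-]*description[.:!]?$",
               re.IGNORECASE),
    # Closers such as "I hope this helps!"
    re.compile(r"^(?:i hope this helps|let me know if you(?:'|\u2019)d like)\b.*$",
               re.IGNORECASE),
]

# Separator lines: 3+ hyphens/asterisks/underscores, or only en/em dash characters.
SEPARATOR_LINE = re.compile(r"^(?:[-*_]{3,}|[\u2013\u2014]+)$")


def strip_filler(text: str) -> str:
    """Drop conversational filler lines and separator lines from model output."""
    kept = []
    for line in text.splitlines():
        stripped = line.strip()
        if SEPARATOR_LINE.match(stripped):
            continue
        if any(pattern.match(stripped) for pattern in FILLER_PATTERNS):
            continue
        kept.append(line)
    # Collapse runs of blank lines left behind by the removed lines.
    cleaned = re.sub(r"\n{3,}", "\n\n", "\n".join(kept))
    return cleaned.strip()
```

Keeping the filter line-based means it never edits text inside a line, so a false positive costs at most one line, which is easy to spot in review.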

The Future of the “Prompt Engineer”

The capabilities of the Vertex AI Prompt Optimizer signal the end of the “prompt engineering” era as we know it. We are moving away from a world where humans manually craft sentences for AI, and toward one where the human’s primary value lies in data curation and quality management.

The human role is evolving: the “prompt engineer” is becoming a “data quality manager.” The critical task is no longer phrasing the instruction but curating the “ground truth” data that defines excellence. Organizations should now evaluate their own workflows: are your manual iterations worth the hidden tax you pay in time and inconsistency, or is it time to let data drive your results?