Your Chatbot is Filing Reports to the Treasury: The Hidden Architecture of AI Surveillance

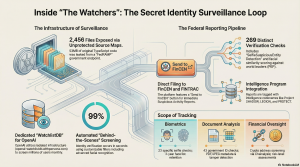

They told us the future would be convenient. “Sign up, verify your identity, talk to the machine.” The marketing materials promised a frictionless bridge to the next era of human productivity, wrapped in the comforting, sterile language of “Trust and Safety.” But the “Safe AGI” brochure didn’t mention the clinical reality lurking behind the interface.

A massive investigation into leaked infrastructure and unprotected source maps has revealed the smoking gun. While you are asked for a selfie to “ensure safety,” the underlying architecture is running a module called SelfieSuspiciousEntityDetection. We have found that the same identity machine processing your ChatGPT login is a sophisticated surveillance tool designed to monitor financial crimes, track “suspicious” biometric profiles, and file reports directly to the federal government.

The “Watchlist” Existed 18 Months Before You Knew

The narrative that AI identity verification is a recent necessity, a response to the power of new models, is a lie. Certificate Transparency (CT) logs reveal that the domain openai-watchlistdb.withpersona.com was operational as early as November 2023.

The naming convention is a Freudian slip of the surveillance state. It wasn’t “openai-verify” or “openai-kyc”; it was a “watchlist database.” This infrastructure was live and rotating SSL certificates for roughly 18 months before the public ever heard a word about it. As the source material confirms: “OpenAI didn’t announce ‘Verified Organization’ requirements until mid-2025… but the watchlist screening infrastructure was operational 18 months before any of that was disclosed.” The architecture for your monitoring was built before the justification for it was even invented.
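The timeline claim is simple date arithmetic over CT log entries, and it can be sketched in a few lines. The snippet below is a minimal illustration, not the investigation's actual tooling: the records are hypothetical placeholders shaped like the JSON that CT search services such as crt.sh return (a `not_before` timestamp per certificate), the exact days are invented for the example, and "mid-2025" is assumed to mean June 1, 2025.

```python
from datetime import date, datetime


def earliest_not_before(records):
    """Return the earliest certificate start date found in the CT records."""
    starts = [datetime.fromisoformat(r["not_before"]).date() for r in records]
    return min(starts)


def whole_months_between(start, end):
    """Count the whole calendar months elapsed from start to end."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    if end.day < start.day:
        months -= 1  # the final month is not yet complete
    return months


# Hypothetical records: only "November 2023" comes from the article;
# the specific days and the second certificate are placeholders.
sample_records = [
    {"name_value": "openai-watchlistdb.withpersona.com",
     "not_before": "2024-02-14T00:00:00"},
    {"name_value": "openai-watchlistdb.withpersona.com",
     "not_before": "2023-11-16T00:00:00"},
]

first_seen = earliest_not_before(sample_records)
announcement = date(2025, 6, 1)  # assumption: "mid-2025" read as June 1, 2025
gap = whole_months_between(first_seen, announcement)
print(first_seen, gap)
```

Under those assumptions the gap comes out to 18 whole months, which matches the figure quoted above. Anyone repeating the exercise should substitute the real `not_before` values from the logs.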

The Direct Line to FinCEN and FINTRAC

The leak reveals that this identity platform isn’t just a gatekeeper for AI; it is a reporting node for the state. We found the smoking gun in the dashboard’s source code: a “Send to FinCEN” button. This allows operators to file Suspicious Activity Reports (SARs) directly with the U.S. Treasury’s Financial Crimes Enforcement Network.

The system validates data against FinCEN XML schemas and tracks reports from “Pending” to “Accepted.” Across the border, the platform files Suspicious Transaction Reports (STRs) to Canada’s FINTRAC, tagging them with intelligence program codenames straight out of a Tom Clancy novel: Project ANTON, Project ATHENA, Project CHAMELEON, Project GUARDIAN, Project LEGION, Project PROTECT, and Project SHADOW.

There is a delicious, dark irony here: government operators are now using “AskAI” (an OpenAI-powered copilot found in the code) to help them process surveillance data and financial reports on OpenAI’s own users.

The Biometric Panopticon: 269 Checks for a Simple Prompt

When you provide your biometric data, you are entering a digital panopticon. The file lib/verificationCheck.ts reveals a staggering 269 individual checks performed on a single user. These aren’t simple age checks; they are a deep-dive behavioral and biometric profile:

- SelfieSuspiciousEntityDetection: An internal flag that determines if your face looks “suspicious” based on undisclosed criteria.

- SelfiePublicFigureDetection: A check to see if you resemble a famous or influential person.

- Deceased Detection: Cross-referencing your data against the SSA Death Master File to ensure you aren’t a ghost in the machine.

- SelfieExperimentalModelDetection: The system is using live user biometrics to test “Experimental ML models” without user knowledge or consent.

- Adverse Media Screening: You are screened against 14 categories of “adverse media,” ranging from terrorism and financial fraud to espionage.

Perhaps most damning is the “retention lie.” While OpenAI’s public disclosures claim biometric data is stored for “up to a year,” the source code in AddListModal.tsx reveals a hardcoded 3-year (1095 days) retention period. In states like Illinois, where the Biometric Information Privacy Act (BIPA) mandates strict consent and transparency, statutory damages of $1,000 to $5,000 per violation could quickly turn into a multi-billion dollar legal nightmare.
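The retention gap and the BIPA exposure are both back-of-the-envelope arithmetic, sketched below. The two day counts come from the figures above. The number of violations is purely hypothetical, chosen only to show how quickly the range scales, and the per-violation amounts are BIPA's statutory figures ($1,000 for negligent violations, $5,000 for intentional or reckless ones).

```python
RETENTION_DAYS_IN_CODE = 1095  # value the article attributes to AddListModal.tsx
DISCLOSED_MAX_DAYS = 365       # "up to a year" in the public disclosure


def retention_ratio(code_days, disclosed_days):
    """How many times longer the coded retention is than the disclosed one."""
    return code_days / disclosed_days


def bipa_exposure_range(violations):
    """Return (low, high) statutory damages in USD for a violation count."""
    return violations * 1_000, violations * 5_000


ratio = retention_ratio(RETENTION_DAYS_IN_CODE, DISCLOSED_MAX_DAYS)

# Hypothetical: 500,000 violations. This count is NOT from the source.
low, high = bipa_exposure_range(500_000)
print(ratio, low, high)
```

With that illustrative count, the range runs from $500 million to $2.5 billion, which is the scale the "multi-billion dollar" warning has in mind. The real figure would depend on how many Illinois residents were affected and how a court counts violations.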

You vs. The World Leaders (Facial Similarity Scoring)

One of the most invasive features discovered is the Politically Exposed Person (PEP) facial recognition system. The platform doesn’t just check your name against a list; it performs a side-by-side “Portrait Similarity” comparison of your selfie against reference photos of world leaders pulled from Wikidata.

The system assigns a “Similarity Level” (Low, Medium, or High) to your face. As the investigation noted: “The machine looked at your face and asked itself: ‘does this person resemble the deputy finance minister of Moldova?’ and it answered.” If the machine decides you look too much like a Moldovan official or a distant relative of a diplomat, your data is flagged for “related PEP” investigations.
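The source does not say how a “Similarity Level” is computed, so the following is only a generic sketch of how face-matching systems commonly turn a numeric score into a coarse label. Everything in it is an assumption for illustration: the use of cosine similarity over embedding vectors, the function names, and especially the 0.5 and 0.8 cutoffs, which are invented and not taken from the leaked code.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def similarity_level(score, medium_at=0.5, high_at=0.8):
    """Bucket a score into Low/Medium/High using hypothetical thresholds."""
    if score >= high_at:
        return "High"
    if score >= medium_at:
        return "Medium"
    return "Low"


# Toy 2-D vectors standing in for real face embeddings.
selfie = [1.0, 0.0]
print(similarity_level(cosine_similarity(selfie, [1.0, 0.0])))  # identical
print(similarity_level(cosine_similarity(selfie, [1.0, 1.0])))  # partial overlap
print(similarity_level(cosine_similarity(selfie, [0.0, 1.0])))  # unrelated
```

The point of the sketch is the design choice, not the numbers: whoever picks the thresholds decides how many ordinary people land in the “High” bucket, and those thresholds are not disclosed.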

The “ONYX” Connection and the ICE Fingerprint

On February 4, 2026, a new subdomain appeared: onyx.withpersona-gov.com. The name matches “Fivecast ONYX,” a $4.2 million AI surveillance tool purchased by ICE to track “violent tendencies” and “sentiment shifts.”

While the leaked code contains no direct immigration workflows, the technical “fingerprint” is undeniable. The government platform and the OpenAI-facing platform share the exact same codebase and Git commit hash: 8d454ac0dc48b2f4ae7addefa22e746079c30089.

This shared infrastructure means that the civilian “safety” checks used by OpenAI and the federal surveillance tools used by agencies like ICE are two sides of the same coin. Your ChatGPT login and a federal “sentiment shift” track are running on the same heart.

The 53MB Security Fumble

In a crowning stroke of irony, this entire surveillance architecture was exposed because a “security and identity” firm failed to secure its own front door. We obtained 2,456 source files (the entire original TypeScript codebase) because 53MB of unprotected source maps were left on a FedRAMP-authorized government endpoint.

By leaving the /vite-dev/ development server path active on a production site, the developers essentially left the master key and the instruction manual on the porch. Anyone with a browser could reconstruct the entire project tree. For a company trusted to handle sensitive government PII and biometrics, failing to understand how a Vite build works is catastrophic incompetence that negates the very “trust” they sell.
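Why do exposed source maps amount to exposed source code? A version 3 source map is a JSON file whose `sources` array lists the original file paths and whose optional `sourcesContent` array carries the original text of each file. The sketch below shows the mechanism on a tiny made-up map. The file paths and contents are hypothetical, and this is an illustration of the format, not the tooling the investigation used.

```python
import json


def files_from_source_map(source_map_text):
    """Map each original path to its embedded source text, when present."""
    source_map = json.loads(source_map_text)
    sources = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    files = {}
    for index, path in enumerate(sources):
        # sourcesContent may be shorter than sources or contain nulls
        content = contents[index] if index < len(contents) else None
        if content is not None:
            files[path] = content
    return files


# Hypothetical source map: paths and contents are invented for the example.
example_map = json.dumps({
    "version": 3,
    "file": "app.js",
    "sources": ["../src/lib/example.ts", "../src/components/Example.tsx"],
    "sourcesContent": ["export const answer = 42;\n", None],
    "names": [],
    "mappings": "",
})

recovered = files_from_source_map(example_map)
for path, text in recovered.items():
    print(path, len(text))
```

When a build ships maps with `sourcesContent` populated, every listed file can be read back verbatim, comments included. That is why production builds normally either omit source maps or keep them off public endpoints.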

The Cost of “Safe AGI”

The stated goal for these measures is “Safe AGI”: the claim that total surveillance is the only way to keep “bad actors” away from powerful models. But the application of this safety is often arbitrary. Consider the “Ukraine anomaly”: OpenAI continues to block users in Ukraine (a country not subject to broad OFAC sanctions) while claiming it is a legal necessity. It isn’t a legal requirement; it’s a policy choice made by an entity with too much power and zero transparency.

We are moving toward a world where interacting with the future requires a permanent place on a government-accessible watchlist. As we’ve seen, once the infrastructure for a panopticon is built, it is never dismantled; it is only expanded.

The findings force one final, uncomfortable question: If the price of interacting with the future is a permanent place on a government-accessible watchlist, are we truly “users,” or are we just data points for the watchers?