From Moon-Bats to Deepfakes: How Scientific Hoaxes Expose the Flaws in Our Information Ecosystem

April 1, 2026 /Mpelembe Media/

The Evolution and Impact of Scientific Deception

Throughout history, scientific hoaxes and misinformation have challenged our epistemological frameworks and tested the limits of institutional authority. While these deceptions have occasionally caused public harm, they also paradoxically serve as vital catalysts for improving methodological rigor, journalistic standards, and public media literacy.

Early pranks like the 1835 Great Moon Hoax (which claimed the discovery of bat-men on the moon) and the 1957 BBC Spaghetti Tree broadcast succeeded by exploiting the public’s unquestioning trust in emerging mass media and authoritative scientific voices. In the late 20th and early 21st centuries, the focus of scientific deception shifted to exposing vulnerabilities within academia itself. Stings such as the 1996 Sokal hoax, the 2018 “Grievance Studies” affair, and John Bohannon’s 2015 “chocolate diet” study systematically exposed ideological biases, flawed peer-review processes, and the dangerous rise of “pay-to-play” predatory journals. Even in legitimate journals, systemic pressures to “publish or perish” have led to an increase in research misconduct, such as fabricated data and citation cartels, further complicating the public’s ability to trust scientific literature.

The Modern Misinformation Crisis

Today, the democratization of information via the internet and the advent of AI-generated “deepfakes” have fueled a global “infodemic,” threatening public health and democratic decision-making. The spread of modern misinformation is driven by several intersecting factors:

Cognitive and Emotional Drivers: Misinformation often elicits high-arousal emotions like fear or anger, making it spread faster than factual news. Additionally, online “echo chambers” reinforce confirmation bias and the “illusory truth effect,” where repeated exposure makes false claims feel true.

Socio-Political Factors: Structural inequalities, historical medical racism, and the decline of local science journalism have created “news deserts” and information voids that bad actors eagerly exploit. Furthermore, intense political polarization means that scientific issues are frequently viewed through partisan lenses rather than empirical merit.

Weaponized Uncertainty: Disinformation campaigns funded by specific interest groups (such as fossil fuel lobbyists or anti-vaccine organizations) deliberately manufacture doubt by promoting contrarian “experts” to create the illusion that there is no scientific consensus.

Strategies for Mitigation

To rebuild trust and combat the spread of false claims, researchers advocate for a multi-pronged approach:

Promoting Scientific Media Literacy: Education systems must move beyond simply teaching scientific facts and focus on developing “competent outsiders”—citizens who may lack deep scientific expertise but possess the critical evaluative skills needed to judge the credibility of media reports and identify logical fallacies.

Psychological Inoculation: Also known as “prebunking,” this proactive strategy works like a medical vaccine. By preemptively exposing the public to weakened forms of misinformation and explaining the deceptive tactics used, educators and communicators can build mental resilience against future manipulation.

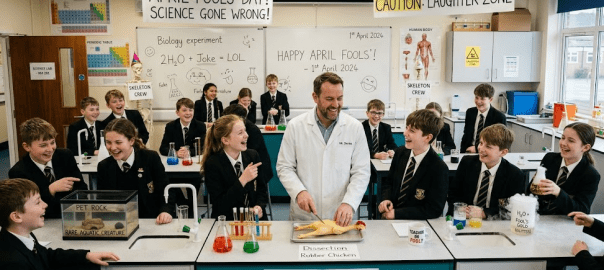

Ethical and Relatable Communication: Scientists are cautioned against using paternalistic “social engineering” or stealth “nudging” to force public compliance, as this can backfire and severely damage the scientific community’s reputation. Instead, experts should bridge the gap by stripping away heavy jargon, engaging in authentic storytelling, and responsibly utilizing humor and satire to make science more accessible, relatable, and trustworthy.

Why Your Brain Loves a Good Lie: 5 Shocking Lessons from History’s Greatest Scientific Hoaxes

1. The Day the World Harvested Spaghetti

On April 1, 1957, millions of British viewers sat down before the BBC’s Panorama, a bastion of sober, middle-class respectability. What they saw was a pastoral revolution: a family in the Swiss canton of Ticino plucking long, supple strands of spaghetti from the branches of “spaghetti trees.” Narrated with the terrifyingly earnest gravitas of Richard Dimbleby, the era’s most trusted voice, the report detailed a “bumper harvest” credited to a mild winter and the “virtual disappearance of the spaghetti weevil.”

At a time when pasta was an exotic curiosity largely confined to tins of tomato-soaked mush, the spectacle was intoxicating. Approximately eight million people watched as Swiss women dried the “crop” in the Alpine sun. The aftermath was a masterclass in the “information disorder” that would later define our digital age. Hundreds called the BBC for “growing tips.” The broadcaster’s response was a piece of beautiful cynicism, effectively telling the audience to get a grip: “Place a sprig of spaghetti in a tin of tomato sauce and hope for the best.”

Delightful as the prank was, the spaghetti-tree hoax is more than a historical footnote. Hoaxes like it are the red-team operations of the epistemic world: stress tests that unmask the structural rot in our cathedrals of knowledge and reveal the cognitive backdoors through which misinformation enters even the most logical minds.

2. The Authority Trap: When Prestige Blinds Logic

The enduring success of the “Spaghetti Tree” and the “Great Moon Hoax of 1835” highlights a psychological security flaw: authority bias. This phenomenon occurs when technical jargon and institutional prestige act as a bypass for our critical thinking.

In 1835, the New York Sun claimed that astronomer Sir John Herschel had discovered lunar bat-men and bipedal beavers through a 24-foot-diameter telescope lens. Author Richard Adams Locke didn’t just lie; he anchored his fiction in the authority of the “journalistic” medium, using the emerging prestige of mass print to lend a veneer of objectivity. Whereas the BBC relied on the broadcast authority of Dimbleby’s voice to sell a visual lie, Locke used a textual trick, dropping the names of real scientific journals to build an architecture of belief.

As Michael Peacock, then editor of Panorama, observed of the power of a narrator:

“Dimbleby knew they were using his authority to make the joke work… Dimbleby loved the idea and went at it eagerly.”

When we lack baseline knowledge, whether it’s the origins of pasta in 1950s Britain or lunar optics in the 1830s, prestige overrides logic. We don’t just see the spaghetti; we see the man in the suit telling us it’s there.

3. The Predatory Paradox: Why “Peer-Reviewed” Isn’t Always a Safety Net

The professional scientific record is not a fortress; it is an ecosystem increasingly vulnerable to “institutional rot.” In 2015, science journalist John Bohannon proved this by manufacturing a study titled “Chocolate with High Cocoa Content as a Weight Loss Accelerator.”

Bohannon’s methodology was a deliberate “red-team” attack. He used a tiny sample of just 15 people and relied on p-hacking: measuring a vast array of variables so that at least one yields a “statistically significant” but meaningless result by chance. The study was “terrible science” by design, yet it was accepted by the International Archives of Medicine in just 24 hours for a fee of 600 euros.

This exposes the Predatory Paradox: the metrics we use to signal quality, like publication in a peer-reviewed journal, are being co-opted by entities that prioritize profit over empirical validity. Most damningly, science journalists failed as the “secondary peer-review layer,” allowing the story to go viral in 20 countries. Even after the retraction, the “citation tail” remains contaminated; out of 28 subsequent citations of the paper, 8 used it as legitimate scientific support. The lie has become part of the permanent scientific record.
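To see why measuring many variables makes a “significant” result so easy to obtain, a rough sketch may help. It assumes the tests are independent and uses the conventional 0.05 threshold; the count of 18 outcomes is an illustrative assumption rather than a figure from the account above, and the function names are hypothetical.

```python
import random


def familywise_false_positive_rate(num_outcomes: int, alpha: float = 0.05) -> float:
    """Chance that at least one of `num_outcomes` independent tests
    falls below `alpha` when no real effect exists."""
    return 1.0 - (1.0 - alpha) ** num_outcomes


def simulate_null_studies(num_outcomes: int, alpha: float = 0.05,
                          num_studies: int = 20_000, seed: int = 0) -> float:
    """Monte Carlo check of the formula above.

    Under the null hypothesis a p-value is uniform on [0, 1], so we draw
    one per outcome and count the studies with at least one 'hit'.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(num_studies):
        if any(rng.random() < alpha for _ in range(num_outcomes)):
            hits += 1
    return hits / num_studies


if __name__ == "__main__":
    # 18 outcomes is an illustrative assumption, not a sourced figure.
    for k in (1, 5, 18):
        print(k,
              round(familywise_false_positive_rate(k), 3),
              round(simulate_null_studies(k), 3))
```

With a single outcome the false-positive chance stays at the nominal 5%; with 18 independent outcomes it climbs to roughly 60%. Real measurements such as weight, cholesterol, and sleep quality are correlated, so the true figure for any given study would differ, but the direction of the effect is the same.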

4. The Mirror of Bias: We Only See What We Want to Believe

The most effective hoaxes don’t just deceive; they flatter. The “Piltdown Man” (1912–1953) succeeded for 41 years because it offered British researchers exactly what they craved: a “missing link” with a large human cranium and an ape-like jaw. This “brain-first” model was the favored evolutionary narrative in Britain at the time. Experts were so blinded by confirmation bias that they failed to notice they were looking at a medieval human skull crudely combined with a modern orangutan jaw.

This rush for “sexy” findings remains a persistent threat. In late 2010, NASA announced the discovery of “#arseniclife,” an organism that supposedly replaced phosphorus with arsenic in its DNA. While not an intentional hoax, it revealed a systemic fragility. Science editors, eager to break a story that would “impact the search for extraterrestrial life,” allegedly sent the paper to reviewers they knew would not be too critical.

As Michael Eisen remarked on this institutional failure:

“We need to get past the antiquated idea that the singular act of publication… should signal for all eternity that a paper is valid.”

5. The Jargon Cloak: Exposing the “Fashionable Nonsense”

In 1996, physicist Alan Sokal exposed a different kind of rot: the “Jargon Cloak.” Sokal submitted a nonsensical paper to the journal Social Text titled “Transgressing the Boundaries: Towards a Transformative Hermeneutics of Quantum Gravity.” The paper was salted with absurdity, claiming that physical reality is merely a social construct. Because it was wrapped in specialized jargon and ideological flattery, it was published as a serious academic contribution.

This was mirrored in the 2017–2018 “Grievance Studies” affair, in which twenty bogus papers, including a study based on “urban dog-park ethnography,” were submitted to academic journals. Seven were accepted. These hoaxes reveal a critical failure in academic gatekeeping: the moment ideological alignment replaces methodological rigor, the system becomes a playground for sophisticated parody.

6. The Solution: Building “Mental Antibodies” via Inoculation

In the face of these “digital wildfires,” our current educational model is failing. We are producing “marginal insiders”: students who can recite facts but cannot evaluate a claim. We must instead build competent outsiders: citizens capable of evaluating the credibility of experts even when the technical science is beyond their grasp.

The defense is Inoculation Theory. Much like a biological vaccine, cognitive inoculation exposes the mind to a “weakened dose” of misinformation to build resistance. Crucially, this provides cross-protection: learning to spot a deceptive tactic in a medical hoax builds a “blanket of protection” that helps the individual recognize the same tactic in climate change denial or political propaganda.

Technique-based Inoculation: Training the brain to recognize logical fallacies, such as ad hominem attacks or the “Jargon Cloak,” used in fake news.

Fact-based Inoculation: Preemptively correcting common myths (e.g., refuting the myth that fast food doesn’t rot) with verified data.

Experiential Inoculation: Active learning, such as the “Burger Decay Myth” experiment, where students test “scientific-sounding” labels themselves to develop a healthy, hardened skepticism.

Conclusion: The Gift of the April Fool

The cost of hoaxes is high: misdirected funds, lost trust, and skewed policy. But their value is the hardening of our epistemic defenses. These deceptions force our institutions to refine their verification standards and remind us that science is not a collection of finished facts, but a continuous, skeptical process.

In an era of deepfakes and AI-driven infodemics, we must realize that our gullibility isn’t just a personal quirk; it’s a security flaw in the collective intelligence of our species. Is your skepticism sharp enough to protect you? Or are you still standing in the Swiss sunshine, waiting for the next spaghetti harvest? To survive the lie, you must learn to red-team the truth.