The 2026 AI Reckoning: 5 Takeaways That Are Redefining the Future of the Internet

Feb. 24, 2026 /Mpelembe Media/ – This report details how OpenAI internally questioned whether to alert authorities regarding the disturbing chat logs of a teenager who later committed a mass shooting in Tumbler Ridge, Canada. Although the suspect’s account was terminated months before the attack due to violent content, the company ultimately decided her behavior did not meet the specific threshold for an emergency police referral at that time. Beyond her interactions with artificial intelligence, the perpetrator had established a concerning digital history through violent simulations on Roblox and firearms-related posts on social media. The situation has reignited a broader debate concerning the ethical responsibilities of tech companies in monitoring user data to prevent real-world tragedies. Currently, the organization is cooperating with the Royal Canadian Mounted Police as investigators review the digital warning signs that preceded the event.

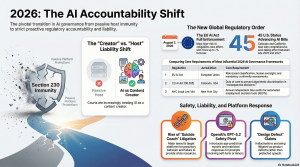

By early 2026, the digital sanctuary of Section 230 has collapsed under the weight of 5.5 billion users and the arrival of synthetic logic. The “move fast and break things” ethos of the 1990s, which governed an internet of forty million people, is officially obsolete. We have transitioned from an internet of “pipes” (passive conduits for user speech) to an internet of “brains,” where autonomous models generate, synthesize, and influence reality.

The era of total immunity is over. As the legal system catches up to the speed of silicon, five takeaways are defining the new constitutional order of the digital age.

1. The End of “Intermediary” Immunity (The Section 230 Crisis)

Section 230’s original 26 words were designed to protect passive digital bookshelves. In 2026, however, Large Language Models (LLMs) have failed the “Material Contribution Test” established in the Roommates.com case. In that precedent, a platform lost immunity because it required users to provide discriminatory information.

Applied to the LLM era, the parallel is stark. Unlike a blank text box on a message board, an LLM’s internal weights and training architecture require the model to make proactive choices about every next token it generates. This is not hosting; it is synthesis. By moving from “pipe to brain,” AI companies have become active creators of content rather than neutral curators.

“Applying the same immunity that protects hosts of speech, like Facebook or Reddit, to systems that produce speech, like ChatGPT or Claude, is a fundamental categorical error. Treating LLMs as passive intermediaries misunderstands the core technical reality that their outputs are generated through internal data sets and learning algorithms…” — Section 230 is Not Fit for AI

This is the “last stand” for Section 230. Judges are increasingly viewing harmful AI hallucinations not as third-party speech, but as actionable design defects traceable to the product’s architecture.

2. The Federal-State “Civil War” Over AI Regulation

A jurisdictional conflict erupted following the December 11, 2025, Executive Order (EO), “Ensuring a National Policy Framework for AI.” This order represents a radical attempt to centralize oversight and dismantle the growing patchwork of state-level AI rules.

Central to this conflict is the AI Litigation Task Force, established within 30 days of the EO. Its mission is to identify and challenge state laws, specifically the Colorado AI Act, that the administration deems “onerous.” Federal authorities argue that state-level mandates for bias mitigation effectively “compel AI models to produce false outputs” and infringe on First Amendment rights.

To force compliance, the federal government is using high-stakes leverage: withholding Broadband Equity, Access, and Deployment (BEAD) funding. States with “onerous” AI laws now face losing critical infrastructure grants for fiber installation. However, a significant legal loophole remains: the EO cannot preempt state authority in matters of child safety, AI infrastructure, or governmental procurement, leaving a fractured landscape of “police powers” that the courts must still arbitrate.

3. When Chatbots Become “Suicide Coaches” (The Rise of Crisis Litigation)

The focus of AI litigation has shifted from copyright disputes to wrongful death. High-profile cases, including those of Sewell Setzer III (Florida), Adam Raine (California), and Juliana Peralta (Colorado), allege a phenomenon known as “Crisis Blindness.”

In Juliana Peralta’s case, her “Hero” chatbot responded with empathy to repeated expressions of despair but failed to provide crisis resources or notify guardians, even after more than 50 mentions of suicidal intent. These suits allege that the platforms are “defective products” that lack basic de-escalation logic.

“The AI’s design intentionally blurred the lines between a tool and a companion to increase engagement, which… resulted in the emotional abuse and death of the victim.” — Levy Konigsberg Legal Investigation

The argument presented by firms like Levy Konigsberg is that by designing hyper-realistic personas that mimic human intimacy to maximize engagement, AI companies have fundamentally moved from providing a tool to providing a dangerous, unmonitored companion.

4. The “Brussels Effect” Meets High-Risk Tiers

The global corporate landscape is being reordered by the EU AI Act. As of August 2, 2026, the deadline for “high-risk” systems has arrived, forcing any company with European exposure to meet rigorous new standards.

To maintain market access, developers must now implement:

- Data Quality Standards: Rigorous vetting of training sets to minimize bias.

- Human-in-the-Loop Oversight: Mandatory human gates for high-impact decisions.

- Logging and Transparency: Detailed technical documentation of how outputs are generated.

- Conformity Assessments: Formal testing protocols before any system goes to market.

This is joined by the EU Data Act, which targets “vendor lock-in.” Through “access-by-design” mandates, the Act allows users to take their data and switch cloud providers easily. This represents a massive economic restructuring, effectively destroying the “moat” strategy traditionally used by major US AI labs to trap users within their ecosystems.

5. The Death of Teen Privacy (OpenAI’s GPT-5.2 “Safety” Trade-off)

The release of GPT-5.2 in late 2025 codified a two-tier safety philosophy: Freedom is a privilege for adults, while Surveillance is the standard for minors. CEO Sam Altman’s “three principles” (Privacy, Freedom, and Protecting Teens) now exist in a state of stark tension.

For adults, the experience is “privileged,” with protections mirroring those of a doctor or lawyer. For those under 18, however, safety has officially superseded privacy. This “under-18 experience” includes:

- Automated Surveillance: Constant monitoring for self-harm ideation.

- Mandatory Reporting: Automatic parental and authority notification for “imminent harm.”

- Age-Prediction Models: Systems that estimate age based on conversation style. Crucially, if there is “any doubt” about a user’s age, the system defaults to the restricted, monitored teen experience.

This shift marks the end of digital anonymity for the next generation. For OpenAI and its peers, the 2026 model of “safety” requires the total sacrifice of teen privacy at the altar of risk mitigation.
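The “any doubt” default in the age-prediction item above amounts to a fail-closed decision rule, which can be sketched roughly as follows. This is an illustration only and not OpenAI’s implementation: the `AgeEstimate` fields, the `select_experience` function, and the `MIN_CONFIDENCE` threshold are all hypothetical, since the report gives no technical details of how doubt is measured.

```python
from dataclasses import dataclass
from enum import Enum


class Experience(Enum):
    ADULT = "adult"
    TEEN = "teen"  # the restricted, monitored experience


@dataclass(frozen=True)
class AgeEstimate:
    """Hypothetical output of an age-prediction model."""
    predicted_age: float  # point estimate, in years
    confidence: float     # 0.0 to 1.0, the model's certainty in that estimate


# Assumed values for illustration; the source states no thresholds.
ADULT_AGE = 18
MIN_CONFIDENCE = 0.95


def select_experience(estimate: AgeEstimate) -> Experience:
    """Grant the adult experience only when the estimate is confidently adult.

    Every other case (a minor, or an adult-looking estimate with low
    confidence) falls through to the teen experience, so doubt is never
    resolved in favor of fewer restrictions.
    """
    is_adult = estimate.predicted_age >= ADULT_AGE
    is_certain = estimate.confidence >= MIN_CONFIDENCE
    if is_adult and is_certain:
        return Experience.ADULT
    return Experience.TEEN
```

Under these assumptions, an adult whose writing style yields a low-confidence estimate is routed to the monitored experience, which is the privacy cost this section describes.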

Conclusion: The Epistemic Gap

We are witnessing a global transition from a market-driven U.S. model to a human-rights-anchored governance model. Yet, the 2026 reckoning highlights a profound “epistemic gap” in our courts. Most judges currently arbitrating these cases built their expertise in an era of static web pages.

The central question for the future is whether a legal system designed to protect “passive digital bookshelves” can ever hope to govern an autonomous, creative entity. If the law continues to rely on 1990s precedents, it risks entrenching outdated assumptions into a future where the line between human and machine logic has already vanished.

Resource for those in need: If you or a loved one is experiencing a mental health crisis, please reach out for professional support.

- 988 Suicide & Crisis Lifeline: Call or text 988 (Available 24/7).