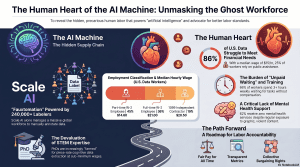

April 18, 2026 /Mpelembe Media/ — These sources analyze the shift toward precarious labor and contracting within the modern economy, with a particular focus on the technology sector. One report highlights the exploitation of data workers in the United States, revealing that those who train artificial intelligence often face low wages, unstable hours, and a lack of essential mental health benefits. Parallel research examines the broader gig economy, noting that while some high-skilled professionals choose independent contracting for its autonomy, many others are forced into these roles by restructuring or a lack of traditional opportunities. This transition often results in limited employer-provided training and the erosion of job security, creating a “race to the bottom” for workers across various demographics. Ultimately, the collection illustrates how algorithmic management and subcontracting are redefining the relationship between firms and employees, often prioritizing corporate flexibility and profits over worker stability.

The Invisible Billion: The Human Truth Behind the AI “Automation” Myth

In the high-gloss corridors of Mountain View and Redmond, Artificial Intelligence is sold as a miracle of pure, frictionless silicon: a “superintelligence” that has finally dispensed with the messiness of human touch. But pull back this “Silicon Curtain,” and the gears of the machine turn out to be made of flesh and blood. Behind the $14.3 billion investment deals and the seamless chatbot responses lies a global supply chain of “ghost workers”: hundreds of thousands of people who train, test, and maintain the models marketed as fully autonomous.

The industry thrives on an optical illusion. Take Amazon Go stores, once hailed as a “Just Walk Out” technological triumph. In reality, the system relied on a hidden workforce in India that remotely monitored shoppers to keep the checkout process working while the public marveled at the “automation.” This is the foundational lie of the AI boom: the brilliance of the tool is often a human imitator hiding in the code.

Takeaway 1: “Artificial” Intelligence is a Human-Powered Illusion

The industry term for this deception is “AI Fauxtomation.” While tech giants market their systems as self-evolving entities, those systems depend on human “imitators,” “verifiers,” and “experts” to do the cognitive heavy lifting that software cannot yet manage.

The CWA (Communications Workers of America) report details a rigid hierarchy of human labor required to keep the lights on:

- Data Collection & Annotation: Workers manually label, filter, and organize the raw data (images, text, audio) that allows models to learn.

- Content Moderation: Acting as “digital first responders,” these workers trawl through graphic violence and hate speech to sanitize digital spaces.

- Model Training: Through Reinforcement Learning from Human Feedback (RLHF), workers provide the signals necessary to align AI responses with human preferences.

- Model Evaluation: “Red-teaming” involves workers stress-testing models with provocative prompts to uncover “hallucinations” or toxic biases.

As Kirn Gill II, a Search Quality Rater, observes:

“If there’s anything I wanted the general public to know, it is that there are low paid people who are not even treated as humans—just little more than employee ID numbers—out there making the 1 billion dollar, trillion dollar AI systems.”

Takeaway 2: The Trillion-Dollar Industry’s $15-an-Hour Secret

There is a staggering economic chasm between the record profits of Alphabet, Microsoft, and Amazon and the precarious lives of the workers building their engines. This disparity is maintained through “fissured workplaces,” a strategy where lead firms use complex webs of subcontractors (like Telus, GlobalLogic, and Scale AI) to limit their liability for employment conditions, benefits, and pensions.

The economic reality for U.S. data workers is stark:

- Median hourly wage: $15 (approximately $22,620 annually).

- Public assistance reliance: 25% of workers rely on food stamps or Medicaid.

- Housing instability: 22% of workers have experienced homelessness because they could not afford rent.

By treating the workforce as a variable expense managed by “shadow HR departments,” multi-trillion-dollar companies can claim high productivity while their essential labor force struggles below the poverty line, often “drowning” in bills while sitting at their computers, unpaid, waiting for tasks to appear.

Takeaway 3: The “Scientific Gig Economy” and the Devaluation of STEM Expertise

The gigification of labor has reached the highest levels of academia. As federal funding for basic science becomes increasingly volatile, and summer stipends and fellowships vanish, doctoral-level researchers are being pushed into a “scientific gig economy.” Doctoral students in mathematics and engineering are now being “farmed” by platforms like Scale AI and Mercor to perform “mind-numbing” RLHF tasks for piece-rate pay.

This is “industrial-scale knowledge arbitrage”: the liquidation of years of publicly funded expertise to train proprietary corporate models. Alongside it, tech giants use “Reverse-Acquihiring,” a regulatory loophole in which they poach entire research teams from startups (such as Amazon’s raid on Adept AI) while avoiding the antitrust scrutiny of a formal acquisition and the obligation to inherit institutional liabilities like employee benefits. The result is a systematic plundering of STEM institutions that turns researchers into “high-end” contract labor without the security of the ivory tower.

Takeaway 4: Mental Health in the Line of Fire

The human cost of AI is most visceral in the trauma of content moderation. These workers act as the “first line of defense,” protecting millions from graphic exploitation and violence. However, the contrast between the trauma they absorb and the support they receive is harrowing.

- Exposure: 70% of workers must sign consent forms for viewing harmful content.

- Neglect: 62% of workers report receiving zero mental health support.

The absurdity of corporate “care” is best captured by one Telus worker who described viewing “cats being tortured nonstop,” only to receive “wellness emails” in which an animated character offered tips on habits rather than actual support. Many workers report complex PTSD, yet they stay silent, knowing that in a fissured workplace a complaint can get them “let go” instantly.

Takeaway 5: Training the Machine to Replace the Trainer

Data work is defined by a cruel, self-cannibalizing irony: employees are paid to train the very models designed to automate their own jobs. This creates a “knowledge-factory” environment where workers feel like interchangeable parts on a five-minute timer.

- 52% of workers believe they are training AI to replace other people’s jobs.

- 36% of workers believe they are training AI to replace their own jobs.

Tahlia Kirk, a technical writer at Accenture, notes that companies are increasingly willing to accept “crappy quality” from an AI tool rather than pay for human expertise. By lowering the bar (enforcing 200 style guidelines instead of 400, for example), firms justify removing human writers, effectively using the workers’ own past output to build a “good enough” replacement.

Takeaway 6: The Crisis of “Model Collapse” and Inaccuracy

The current labor model prioritizes speed over substance, creating an existential “quality crisis” for AI itself. Workers are governed by “Average Estimated Times” (AETs), which force them to rush through complex tasks. In one reported incident involving Google’s Gemini, workers were told to ask the AI itself for answers, rather than research independently, so they could meet these tight deadlines.

When a model’s own answers are fed back in as training data, the result is a feedback loop of “crappy quality” that can lead to Model Collapse, a phenomenon in which an AI trained on recursively generated, imperfect data progressively degrades until it becomes useless.

The Three Biggest Inefficiencies in AI Training:

- Inadequate Training: 36% of workers find training ineffective; 87% of workers are regularly assigned tasks for which they are not prepared.

- Unclear Metrics: 86% of workers find the metrics used to evaluate them are vague or unclear.

- Time Pressure (AETs): Over half of workers report that time estimates are too short to allow for accurate work.

Conclusion: A Call for Accountability

The “Invisible Billion” are beginning to find their voice. Through the Alphabet Workers Union (AWU-CWA), data workers are demanding fair wages, transparency in evaluation, and the right to collective bargaining. They have already secured modest victories, such as the first-ever pay raises for Google Raters, but a responsible AI industry requires more than voluntary corporate ethics.

It requires policymakers to close the “Reverse-Acquihiring” loophole and strengthen joint-employer liability, so that lead firms answer for the conditions of the subcontracted workers they depend on. As we marvel at the “superintelligence” of our new tools, we must ask ourselves: are we willing to accept that the “intelligence” we admire is built on a foundation of structural exploitation and human trauma, hidden in the code?