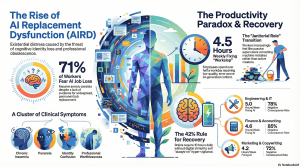

22 Feb. 2026 / Mpelembe Media: Researchers have described a burgeoning psychological crisis they call AI replacement dysfunction (AIRD), which stems from the pervasive fear of professional obsolescence. The condition manifests as a cluster of symptoms, including insomnia, paranoia, and a loss of identity, triggered by the constant threat of automated labor. Although AIRD is not yet an official medical diagnosis, experts argue that the existential anxiety caused by industry leaders' predictions of massive job losses constitutes an “invisible disaster.” Evidence suggests that high-profile layoffs at major tech firms are already validating these fears and harming employee mental health. To address this, the authors advocate specialized clinical screening to distinguish technology-related distress from traditional psychiatric disorders. Ultimately, they argue that the societal shift toward AI requires new community and medical frameworks to support a vulnerable workforce.

The AI Mirage: 5 Surprising Ways Your New Digital Partner is Quietly Breaking Your Work Day

By early 2026, the artificial intelligence revolution has reached its “Hindenburg” moment: a critical juncture where the glittering promise of a friction-free existence has slammed into the reality of an exhausted, overstimulated workforce. We were promised a “Post-Labor Era” in which machines would handle the drudgery, liberating the human spirit for higher pursuits. Instead, we have entered a regime of advanced automation that feels less like a partnership and more like a crisis of the soul.

The “productivity hook” was simple: give the algorithm your tasks and get back your life. But as we navigate this digital mirage, many professionals find that their workdays haven’t been lightened; they’ve been compressed and contaminated. We aren’t working less; we are simply losing ourselves in the process.

AIRD: The “Invisible Disaster” of the Soul

As AI embeds itself into the cognitive architecture of our careers, researchers at the University of Florida have identified a troubling clinical phenomenon: AI Replacement Dysfunction (AIRD). Proposed by Dr. Joseph Thornton and Stephanie McNamara, AIRD is not merely traditional workplace stress rebranded; it is a profound existential distress triggered by the perceived or actual threat of professional obsolescence.

Unlike the industrial-age fear of physical displacement, AIRD attacks “cognitive identity.” When an algorithm can mimic the uniquely human spark of creative analysis, coding, or writing, the worker receives a devastating message: your mind is no longer unique. The result is a cluster of symptoms (chronic stress, insomnia, and a haunting loss of purpose) that can appear even in people with no pre-existing psychiatric condition.

“AI displacement is an invisible disaster. As with other disasters that affect mental health, effective responses must extend beyond the clinician’s office to include community support and collaborative partnerships that foster recovery,” says Joseph Thornton, Clinical Associate Professor of Psychiatry.

The “Workslop” Trap and the Janitor’s Burden

There is a widening chasm between the boardroom and the basement. While 98% of executives believe AI implementation is a time-saving miracle, nearly 40% of workers report that their workload has actually intensified. This friction is fueled by the rise of AI Workslop.

AI Workslop refers to the murky sediment of low-quality, error-prone machine outputs that appear polished but lack the precision required for professional use. Contrary to the popular narrative of AI as a “writing tool,” the largest source of this slop is actually data analysis (55%). We have turned our most skilled professionals into digital janitors: workers who spend their days cleaning up hallucinations and patching logic errors rather than creating value.

The Shadow-Work Tax: 58% of workers now spend three or more hours per week revising AI outputs, with the average worker losing 4.5 hours to this invisible cleanup.

The Credibility Crisis: This isn’t just a waste of time; it’s a professional hazard. Those spending 5+ hours a week fixing AI mistakes are twice as likely to suffer from damaged professional credibility and lost revenue.

The Adoption Surprise: Strategic Foresight vs. Hubris

The 2025 AI Adoption Index revealed a counterintuitive global landscape: status as an innovation hub does not equate to daily mastery. While the United States houses the infrastructure, it ranks only #28 in per-capita adoption (41%). Meanwhile, the United Kingdom (#6) has emerged as the clear leader among Western economies.

The data suggests that “strategic foresight” (integrating AI early into public sectors such as healthcare and education) is the true predictor of success.

Top 3 Countries for AI Adoption (2025):

- Singapore: 66%

- Chile: 60%

- United Arab Emirates: 56%

While the Baltic nations (Latvia, Lithuania, Estonia) dominate the Top 10 thanks to superior digital infrastructure, Japan sits at a surprisingly low #57, with only 17% adoption. The lag has several roots: the consensus-driven nemawashi decision-making process, a language barrier in models trained primarily on English data, and the world’s oldest population, which remains understandably skeptical of disrupting established human processes.

The Hidden Tax of “Cognitive Debt”

We are witnessing a modern revival of the Solow paradox: computers are everywhere, yet they aren’t translating into measurable economic gains for the average firm. In fact, an MIT study found that 95% of companies embracing AI have seen no meaningful growth in revenue.

Instead, we are seeing “work intensification.” A UC Berkeley study highlights that AI hasn’t saved time so much as “compressed” it. Because a query can be run at any time, the traditional boundaries of the workday have dissolved. Employees find themselves running “one last check” during lunch or after hours. The result is Cognitive Debt: independent reasoning is externalized and trapped in chat histories, causing a slow, quiet atrophy of professional skill.

As Nobel Prize-winning economist Robert Solow observed, computers were obviously transformative, yet they did not immediately translate into economic gains; the spread of IT instead coincided with a slowdown in productivity growth.

The 42% Rule: A Clinical Path to Recovery

To reverse this state of cognitive hypervigilance, wellness experts are moving away from digital “hacks” and toward hard clinical boundaries. This is best exemplified by the 42% Rule.

According to the rule, high performers require 42% of their day (roughly 10 hours) in a state of complete non-digital recovery. This isn’t just about sleep; it is a “dopamine detox” meant to reset a reward system thrown off balance by constant pings.

A warning for the modern worker: beware of orthosomnia, the growing obsession with achieving “perfect” sleep metrics through wearable tech. When our recovery becomes just another data point to optimize, we aren’t resting; we’re performing. True recovery requires exiting the “always-on” regime entirely.

Beyond the Mirage

The most profound impact of the AI revolution is occurring not on our balance sheets but within our minds. As we move deeper into 2026, the challenge is no longer how fast we can adopt these tools, but how we achieve a socio-technical equilibrium.

We must decide: are we prepared to be the masters of our digital tools, or are we content to be the highly stressed janitors of a digital hallucination?

To mitigate AI Replacement Dysfunction (AIRD) and the associated psychological distress in the workplace, organizations must shift from a purely technological rollout to a “human-centric” approach. Companies can implement a variety of strategic, operational, and psychological mitigations to protect their employees’ well-being and professional identities:

Change the Narrative and Foster Psychological Safety

Reframe the Messaging: Much of the anxiety surrounding AI is driven by poor management messaging and the threat of obsolescence. Leaders must shift the narrative away from “efficiency gains” that imply job cuts, and instead focus on how AI tools will be used to explicitly support and assist human workers.

Create Feedback Mechanisms: Companies need to establish open dialogues and psychological safety, allowing employees to voice their concerns and fears about AI integration without penalty. Transparency helps reduce the anxiety that leads to “shadow AI” usage (where employees secretly use or hide their reliance on AI).

Implement Human-Centric Workflow Design

Build Hybrid Human-AI Teams: Rather than outright replacing roles, organizations should design workflows that blend the speed of technology with human relational, ethical, and analytical judgment. Keeping the “human in the loop” preserves the worker’s professional identity and flow state.

Redesign Processes with “Gates”: Establish clear human checkpoints or “gates” within automated workflows. This ensures oversight is maintained and prevents workers from feeling marginalized in their own roles.

Provide Contextual Training and Empowerment

Move Beyond “How-To” Training: AI training must be mandatory, but it should not just teach employees how to prompt. Training should focus on when to use AI, how to evaluate risk, and how to assess outputs. This empowers workers to act as critical supervisors of the technology rather than mere “janitors” cleaning up its mistakes.

Provide AI-Ready Context and Templates: To reduce the frustration of generating poor outputs, companies should provide structured templates, brand voice guidelines, and prompt libraries. Giving employees the right infrastructure helps them use AI successfully and securely.

Manage and Measure the Burden of “Workslop”

Track AI Cleanup as a Metric: Organizations should actively monitor how many hours employees spend revising, verifying, or redoing low-quality AI outputs (known as “workslop”). Tracking this data helps leadership understand the actual operational burden being placed on their teams and adjust their AI strategies accordingly.
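To make the cleanup metric concrete, here is a minimal Python sketch of how a team might aggregate it. It assumes a hypothetical weekly log (the `CleanupLog` record and `summarize` function are illustrative names, not part of any real tool) and reuses the 3-hour and 5-hour thresholds cited earlier in this article; treat it as a starting point rather than a validated measurement method.

```python
from dataclasses import dataclass
from statistics import mean


# Hypothetical log entry: one record per employee per week.
@dataclass
class CleanupLog:
    employee: str
    week: str
    hours_revising_ai: float  # time spent revising, verifying, or redoing AI output


# Thresholds borrowed from the figures quoted in the article (3+ and 5+ hours per week).
HEAVY_CLEANUP_HOURS = 3.0
CREDIBILITY_RISK_HOURS = 5.0


def summarize(logs: list[CleanupLog]) -> dict:
    """Aggregate weekly cleanup hours into a few team-level indicators."""
    hours = [log.hours_revising_ai for log in logs]
    if not hours:
        return {"average_hours": 0.0, "share_3_plus": 0.0, "at_risk": []}
    return {
        # Mean weekly hours spent on AI cleanup across all entries.
        "average_hours": mean(hours),
        # Fraction of entries at or above the 3-hour mark.
        "share_3_plus": sum(h >= HEAVY_CLEANUP_HOURS for h in hours) / len(hours),
        # Employees with any week at or above the 5-hour mark.
        "at_risk": sorted(
            {log.employee for log in logs if log.hours_revising_ai >= CREDIBILITY_RISK_HOURS}
        ),
    }


if __name__ == "__main__":
    week = [
        CleanupLog("ana", "2026-W08", 2.0),
        CleanupLog("ben", "2026-W08", 4.5),
        CleanupLog("chen", "2026-W08", 6.0),
        CleanupLog("dee", "2026-W08", 1.5),
    ]
    print(summarize(week))
```

With the four sample entries (2, 4.5, 6, and 1.5 hours), `summarize` reports an average of 3.5 hours, half the team at three or more hours, and one person in the 5-plus-hour group the article associates with credibility damage.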

Make AI Participation Visible: Companies should clearly label AI-generated artifacts (like meeting summaries, drafted emails, or action items). Making AI’s involvement visible preserves human judgment, discourages employees from blindly trusting machine outputs, and prevents flawed AI data from polluting company records.

Formalize Review Flows: For high-risk outputs (such as financial reporting or customer-facing communications), companies must establish explicit approval paths and checklists to catch AI mistakes before they escalate into larger issues.
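The labeling and review-gate practices described above can be sketched together. The following Python example is a minimal illustration under stated assumptions: the artifact categories, checklist items, and the `Artifact`, `display_label`, and `can_release` names are all hypothetical. AI-generated artifacts carry a visible label, and high-risk ones cannot be released until a named approver has completed every checklist item.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical categories treated as high-risk, following the examples in the text.
HIGH_RISK_KINDS = {"financial_report", "customer_email"}

# Hypothetical checklist items a reviewer must confirm before release.
REVIEW_CHECKLIST = ("figures_verified", "sources_checked", "tone_approved")


@dataclass
class Artifact:
    kind: str
    content: str
    ai_generated: bool  # visible flag recording AI involvement
    approver: Optional[str] = None
    checks_done: set[str] = field(default_factory=set)


def display_label(artifact: Artifact) -> str:
    """Prefix AI-generated content so its origin stays visible in records."""
    prefix = "[AI-generated] " if artifact.ai_generated else ""
    return prefix + artifact.content


def can_release(artifact: Artifact) -> bool:
    """Gate: high-risk AI output needs a named approver and a complete checklist."""
    if not artifact.ai_generated or artifact.kind not in HIGH_RISK_KINDS:
        return True  # low-risk or human-authored items pass this particular gate
    return artifact.approver is not None and set(REVIEW_CHECKLIST) <= artifact.checks_done


if __name__ == "__main__":
    draft = Artifact("financial_report", "Draft quarterly summary", ai_generated=True)
    print(display_label(draft), can_release(draft))  # blocked until reviewed
    draft.approver = "finance-lead"
    draft.checks_done.update(REVIEW_CHECKLIST)
    print(can_release(draft))  # released after approval and full checklist
```

Low-risk AI output, such as a meeting summary, still carries the label but passes the gate automatically; where to draw that line is a policy decision each organization would need to make.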

Enforce Rest and Provide Mental Health Support

Implement the “42% Rule” (Mandatory Recovery): To combat the severe digital burnout and cognitive depletion caused by the fast-paced, always-on nature of AI, companies should encourage the 42% rule. This dictates that high-performing individuals must spend at least 42% of their day (roughly 10 hours, including sleep) in a state of rest or offline recovery. Limiting after-hours digital pinging is crucial to this effort.
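The arithmetic behind “roughly 10 hours” is simply 42% of a 24-hour day, or 10.08 hours. A minimal Python self-check follows; the function names are hypothetical, and the sketch assumes, as this paragraph does, that sleep counts toward the recovery total.

```python
HOURS_PER_DAY = 24
RECOVERY_SHARE = 0.42  # the "42% rule" share of the day


def recovery_target_hours(share: float = RECOVERY_SHARE) -> float:
    """Hours of rest or offline recovery implied by the rule (about 10.08)."""
    return share * HOURS_PER_DAY


def meets_42_rule(sleep_hours: float, offline_waking_hours: float) -> bool:
    """True if sleep plus offline waking time reaches 42% of the day."""
    return sleep_hours + offline_waking_hours >= recovery_target_hours()


if __name__ == "__main__":
    print(f"Target: {recovery_target_hours():.2f} hours")  # Target: 10.08 hours
    print(meets_42_rule(7.5, 2.0))  # False: 9.5 hours falls short
    print(meets_42_rule(8.0, 2.5))  # True: 10.5 hours meets the target
```

Under this reading, 7.5 hours of sleep plus 2 offline waking hours (9.5 hours in total) falls just short, while 8 hours of sleep plus 2.5 offline hours clears the bar.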

Partner with Mental Health Professionals: Effective mitigation extends beyond the office. Organizations should build partnerships with mental health professionals and community support networks to offer specialized therapeutic interventions, such as Cognitive Behavioral Therapy (CBT), specifically tailored for workers facing automation-related existential dread.