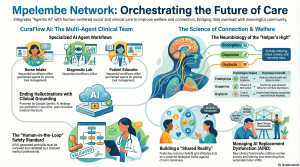

April 9, 2026 /Mpelembe Media/ — The Mpelembe Network is a multifaceted digital collaborative platform built on Google Cloud that integrates artificial intelligence with social, health, and community services. The network facilitates specialized initiatives like CuraFlow AI, which uses multi-agent systems to orchestrate clinical care, and the Justina Mutale Foundation, which promotes gender equality and STEM education for African women. Central to the platform’s philosophy is the “helper’s high,” a neurobiological concept suggesting that altruism and volunteering are essential for mental resilience and physical longevity. Beyond technology, the materials examine complex social issues such as loneliness, psychological trauma, and the economic challenges of providing care in aging urban environments like Richmond upon Thames. Collectively, these sources present a vision for a 2026 “Agentic Era,” where high-fidelity AI and human collaboration bridge the gap between social isolation and meaningful community support.

The Agentic Era: 6 Surprising Ways AI and Altruism are Redefining Our Future

1. Introduction: The Paradox of the Connected Age

We are currently navigating a high-stakes paradox: an unprecedented abundance of data paired with an exhausting deficit of time and meaningful connection. In our modern ward of digital noise, the administrative burden of synthesis often eclipses actual care. As a Digital Anthropologist, I see 2026 as a pivotal “Agentic Era,” a time when technology is shifting from reactive chatbots to proactive orchestration.

At the heart of this transition is the Mpelembe Network. Founded by Sam Mbale, the network’s architecture is inspired by his background in civil engineering, specifically his work on the Thames Water London Ring Main. Just as that 50-mile tunnel serves as a vital public utility to meet London’s growing thirst, the Mpelembe Network is designed as a future-proofed digital utility. It orchestrates healthcare, social support, and community links to ensure that human welfare scales alongside technological innovation. This is the era where AI doesn’t just answer questions; it reconstructs the very fabric of community.

2. The “Helper’s High” is a Biological Life Hack

Our biology is effectively hard-wired for service. Altruism is not merely a moral choice; it is a neurobiological imperative for mental resilience. Research confirms a physiological response known as the “helper’s high,” where prosocial behavior triggers the brain’s reward systems. To thrive in 2026, we advocate for the “80/20 rule” of wellbeing: 80% eudaimonic activity (meaning-based growth and contribution) and 20% hedonic pleasure (fleeting, instinctive enjoyment).

The science of doing good is your brain’s secret weapon. Eudaimonic traits are associated with enhanced functional connectivity in the Default Mode Network (DMN) and the Medial Prefrontal Cortex, the regions responsible for our sense of “true self.” When we engage in acts like those seen in Vineet Bhatnagar’s Shine charity or the Justina Mutale Foundation, our brains release a vital neurochemical cascade:

- Endorphins: Natural painkillers that induce euphoria and reduce the physiological burden of stress.

- Dopamine: Links altruistic acts to reward circuits, reinforcing the drive for social connection.

- Oxytocin: The “bonding hormone” that fosters trust and empathy and is associated with lower mortality rates.

“Helping others isn’t a sacrifice of your wellbeing; it is the very foundation of it.” – Alicia Hixon

3. Multi-Agent Orchestration: Why the Future of Medicine isn’t a Chatbot, but a Team

In the clinical landscape of 2026, generalization is the enemy of precision. This is why we are seeing a shift from “Autopilot to Co-Pilot” through systems like CuraFlow AI. This platform recognizes that clinical excellence requires a division of labor, much like a physical medical team.

In regions like Richmond upon Thames, which acts as a “demographic time machine” due to its rapidly aging 80+ population, this orchestration is a logistical necessity. CuraFlow AI uses a sequential workflow of specialized agents to reclaim a doctor’s cognitive bandwidth:

- Nurse Intake Agent: Extracts and summarizes patient history.

- Diagnostic Lab Agent: Searches peer-reviewed medical literature in real time.

- Clinical Protocol Agent: Monitors medications and vitals (BPM, temp) via real-time dashboards.

- Patient Educator Agent: The “empathetic core” that translates clinical jargon into compassionate instructions.

Crucially, the “Human-in-the-Loop” safety standard remains non-negotiable; all AI-generated protocols must be validated by a licensed professional. By decoupling administrative synthesis from clinical intuition, we restore eye contact at the bedside.
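
The sequential hand-off described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not CuraFlow AI’s actual implementation: the `CaseFile` record, the four agent functions, and `run_pipeline` are hypothetical names, and each agent is a trivial placeholder for what would be a model call in a real system. The sketch shows just the two ideas from the text: agents run in a fixed order over a shared record, and nothing counts as approved until a human reviewer signs off.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class CaseFile:
    """Shared record handed from one agent to the next (hypothetical structure)."""
    raw_history: str
    notes: List[str] = field(default_factory=list)
    approved: bool = False


def nurse_intake(case: CaseFile) -> CaseFile:
    # Placeholder for summarization: keep only the first sentence of the history.
    summary = case.raw_history.split(".")[0].strip()
    case.notes.append(f"intake: {summary}")
    return case


def diagnostic_lab(case: CaseFile) -> CaseFile:
    # Placeholder: a real agent would attach cited literature findings here.
    case.notes.append("lab: literature review pending")
    return case


def clinical_protocol(case: CaseFile) -> CaseFile:
    # Placeholder: a real agent would check medications and vitals.
    case.notes.append("protocol: draft care plan")
    return case


def patient_educator(case: CaseFile) -> CaseFile:
    # Placeholder: a real agent would rewrite the plan in plain language.
    case.notes.append("education: plain-language instructions")
    return case


# Fixed order, mirroring the list of agents in the text.
PIPELINE = [nurse_intake, diagnostic_lab, clinical_protocol, patient_educator]


def run_pipeline(case: CaseFile,
                 clinician_approves: Callable[[CaseFile], bool]) -> CaseFile:
    """Run every agent in sequence, then require human sign-off."""
    for agent in PIPELINE:
        case = agent(case)
    # Human-in-the-loop gate: only the reviewer's decision sets `approved`.
    case.approved = clinician_approves(case)
    return case


if __name__ == "__main__":
    result = run_pipeline(
        CaseFile("Patient reports chest pain. No prior history."),
        clinician_approves=lambda c: True,  # stands in for a licensed reviewer
    )
    for note in result.notes:
        print(note)
    print("approved:", result.approved)
```

Passing the reviewer in as a callback keeps the approval decision outside the agents themselves, which is one simple way to make the human check impossible to skip.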

4. The End of Hallucinations: Anchoring AI in “Clinical Grounding”

The primary friction point for AI adoption has been “hallucinations,” the generation of factually incorrect output. To address this, the Google Gemini engine employs “Clinical Grounding.” This creates a “computational audit trail,” transforming AI from a generative black box into a transparent research partner.

| FACT CHECK: The Diagnostic Lab Agent |
| --- |
| Source Authority: The Diagnostic Lab agent does not “guess.” It utilizes Gemini’s native integration with Google Search to anchor every finding in real-time, peer-reviewed medical literature. |
| Verification: Every clinical suggestion includes a verifiable audit trail of citations, ensuring that the evidence base is transparent for medical oversight. |
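
One way to picture an audit trail of citations is as a data structure in which every suggestion carries its sources, so that unsupported suggestions can be separated out before review. The Python sketch below is a hypothetical illustration only: `Citation`, `Finding`, and `split_by_grounding` are invented names, the sample entries are placeholders rather than real studies, and it does not reproduce how Gemini or CuraFlow AI attach sources.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Citation:
    title: str   # placeholder text in this sketch, not a real reference
    source: str  # e.g. a journal or database name


@dataclass(frozen=True)
class Finding:
    claim: str
    citations: Tuple[Citation, ...] = ()


def split_by_grounding(findings: List[Finding]) -> Tuple[List[Finding], List[Finding]]:
    """Separate findings that carry at least one citation from those that carry none."""
    grounded = [f for f in findings if f.citations]
    ungrounded = [f for f in findings if not f.citations]
    return grounded, ungrounded


if __name__ == "__main__":
    findings = [
        Finding("Suggestion A", (Citation("Placeholder study", "Placeholder journal"),)),
        Finding("Suggestion B"),  # no sources attached
    ]
    grounded, ungrounded = split_by_grounding(findings)
    print("grounded:", [f.claim for f in grounded])
    print("needs sources:", [f.claim for f in ungrounded])
```

A check like this confirms only that sources are present; judging whether they actually support the claim remains the reviewing clinician’s job.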

5. AI Replacement Dysfunction (AIRD): The New Mental Health Frontier

As AI becomes more agentic, we face an “invisible disaster” known as AI Replacement Dysfunction (AIRD). Emerging during the “crisis decade” (2026–2035), this condition stems from the existential anxiety of professional obsolescence.

Researchers like Stephanie McNamara and Joseph Thornton have documented that AIRD manifests differently from traditional anxiety. It often involves “high-performance masking,” where individuals perform success while feeling hollowed out by fear. Symptoms include:

- Insomnia and Paranoia: Persistent dread regarding economic instability.

- Crisis of Self-Concept: A profound loss of identity as automated labor replaces career roles.

- Affective Blunting: A protective emotional withdrawal from a world that feels increasingly transactional.

Distinguishing this technology-related distress from traditional psychiatric disorders is essential for supporting our vulnerable 2026 workforce.

6. “Shared Reality” is the Ultimate Buffer Against Loneliness

Modern life often forces a distinction between objective social isolation and the subjective feeling of loneliness. In busy cities, “stimulus overload” causes people to withdraw, leading to affective blunting. You can be lonely in a crowd because you lack a “Shared Reality”: the perception of common feelings and beliefs with another person.

Loneliness is not a minor inconvenience; it is as damaging to health as smoking 15 cigarettes a day. The Mpelembe Network addresses this by creating “lifelong links” rather than social media “likes.” By facilitating “AI for Good” programs, such as Justina Mutale’s $10m Presidential STEM Fellows Program, the network fosters the genuine connection required to reduce uncertainty and find meaning.

7. Practical Wisdom: Habit Stacking Your “Micro-Gives”

Reclaiming your biological baseline doesn’t require leading a global NGO. Peer Support Supervisor Joe Jefferies suggests “micro-gives”: small, impactful actions that boost social capital. To make these sustainable, use Habit Stacking to pair them with existing routines:

- The Morning Coffee Stack: Send one supportive text message to a friend while your coffee brews.

- The Commute Stack: Give a sincere compliment to a colleague or stranger.

- The Social Media Stack: Leave one positive, encouraging comment instead of a passive “like.”

- The Entryway Stack: Hold the door open with a genuine smile and eye contact.

As social entrepreneur Alicia Hixon notes, the motto for a resilient life is simple: “Do good and greater will follow you.”

8. Conclusion: Redefining Wealth in 2026

In the Agentic Era, wealth is no longer defined by what we accumulate, but by what we contribute. AI is evolving into a sophisticated “co-pilot” for human welfare, organizing the complexity of our data so that we can return to the work only humans can do: providing empathy and intuition.

We should not measure the success of technology by the speed of its processors, but by the minutes of eye contact it restores between us. When we align our daily actions with our hard-wired biological drive for service, we do more than help others; we rewire ourselves for a purposeful existence.

Closing Question: If your brain is hard-wired for service, what is one “micro-give” you can stack onto your morning routine tomorrow?