Tag Archives: Natural language processing

Integrating AI Agents with Google Workspace via CLI and MCP

The AI Brain Meets the Real World: A Guide to Function Calling

March 8, 2026 /Mpelembe Media/ — The provided sources describe the emergence of AI agents designed to automate productivity tasks within the Google Workspace ecosystem. A central development is the release of gws, an open-source command-line interface that unifies various Google APIs into a single, machine-readable format. This tool allows large language models to interact directly with Gmail, Calendar, and Drive by providing structured JSON outputs and pre-built agent skills. Technical tutorials illustrate how developers can use the Vercel AI SDK and Model Context Protocol (MCP) to build assistants capable of managing schedules and conducting web searches. Furthermore, the integration of the Gemini CLI with tools like Google Sheets highlights a shift toward natural language data automation. Together, these resources mark a transition from manual API management to autonomous agentic workflows powered by generative AI. Continue reading
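To make the idea of function calling concrete, here is a minimal Python sketch of the dispatch step: the model emits a structured JSON request, and the host program looks up the named tool and runs it. The tool `list_events`, the `TOOLS` registry, and the shape of the JSON call are hypothetical stand-ins chosen for illustration; they are not the gws CLI, the Vercel AI SDK, or any Google API.

```python
import json

# Hypothetical tool: stands in for a real calendar lookup.
# It returns canned data and calls no external service.
def list_events(date: str) -> dict:
    return {"date": date, "events": ["Team sync at 10:00"]}

# Registry mapping tool names (as a model might emit them) to callables.
TOOLS = {"list_events": list_events}

def dispatch(tool_call_json: str) -> str:
    """Parse a model-emitted tool call and return the tool result as JSON."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        # Report unknown tools back to the model instead of raising.
        return json.dumps({"error": f"unknown tool: {name}"})
    result = TOOLS[name](**call.get("arguments", {}))
    return json.dumps(result)

if __name__ == "__main__":
    print(dispatch('{"name": "list_events", "arguments": {"date": "2026-03-08"}}'))
```

Returning the result as JSON mirrors the machine-readable output the summary describes, so the model can read the tool's answer on its next turn.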

Stop Guessing Your Prompts: 4 Game-Changing Lessons from the Vertex AI Prompt Optimizer

Maximizing AI Accuracy: Automating Workflows with the Vertex AI Prompt Optimizer

23 Feb. 2026 /Mpelembe Media/ — The Vertex AI Prompt Optimizer is a tool designed to refine AI instructions automatically using ground truth data. By comparing initial outputs against high-quality examples, the system iteratively adjusts system prompts to achieve greater accuracy and consistency. The author illustrates this process through a Firebase case study, where the tool was used to transform rough video scripts into professional YouTube descriptions. Although the optimization process requires an upfront investment in time and tokens, it significantly reduces the need for manual human intervention. Ultimately, the source highlights how data-driven optimization can replace trial-and-error prompting with a more reliable, automated workflow. Continue reading

The Molecular Structure of Thought: Why You Can’t Just “Copy-Paste” AI Reasoning

Feb 22, 2026 /Mpelembe Media/ — This research explores the structural stability of Long Chain-of-Thought (CoT) reasoning in large language models by using a chemical bond analogy. The authors identify four primary reasoning behaviors (normal operation, deep reasoning, self-reflection, and exploration), which act as “bonds” that stabilize the logical progression of a model. By applying mathematical modeling and Gibbs–Boltzmann energy distributions, the text demonstrates how self-correction and hypothesis branching prevent “hallucination drift” and ensure self-consistency. Comparative testing across various models, such as LLaMA and Qwen, reveals that high structural correlation between reasoning chains is necessary for maintaining performance. The study also utilizes Sparse Auto-Encoders and t-SNE visualizations to map the geometric compactness of these thought processes in embedding space. Ultimately, the findings suggest that semantic compatibility and rigid cognitive architectures determine a model’s ability to solve complex mathematical and scientific problems. Continue reading

The Fluid Future: A Learner’s Guide to Adaptive and Liquid AI Architectures

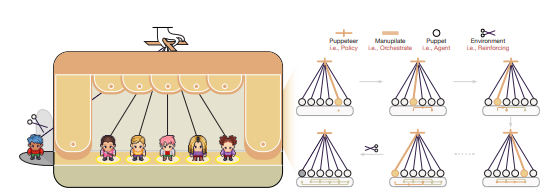

Dynamic Agent Orchestration: The Puppeteer Paradigm

Dec. 02, 2025 /Mpelembe Media/ — The academic paper introduces a novel framework for coordinating complex problem-solving in Large Language Model (LLM)-based multi-agent systems. To address the inherent inefficiencies of traditional static agent structures, the authors propose a “puppeteer-style” paradigm where a central orchestrator dynamically selects and sequences agents based on the evolving task state. This centralised orchestrator policy is continuously optimised using reinforcement learning (RL), leveraging a tailored reward function that explicitly balances solution quality with computational efficiency. Empirical results across various closed- and open-domain scenarios demonstrate that this adaptive approach achieves superior performance compared to existing methods while concurrently reducing token consumption. Finally, analysis of the evolving collaboration patterns confirms that the RL-driven policy leads to the emergence of highly compact and cyclic reasoning structures. Continue reading

The value of thought. How human-AI collaboration is measured economically

This touches on how large language models (LLMs) operate. Tokenization is the fundamental natural language processing (NLP) step of breaking raw text into smaller units called tokens, such as words, subwords, or characters. This crucial first step transforms unstructured text into a structured format that machine learning models can process.
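The three granularities mentioned above can be compared in a short Python sketch. The word and character splitters use only the standard library. The subword splitter is a toy greedy longest-match over a small hypothetical vocabulary (`TOY_VOCAB`), chosen for illustration; production tokenizers learn their vocabularies from data and differ in detail.

```python
import re

def word_tokens(text: str) -> list[str]:
    """Split into runs of word characters and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)

def char_tokens(text: str) -> list[str]:
    """Treat every character, including spaces, as a token."""
    return list(text)

# Hypothetical vocabulary for the example only.
TOY_VOCAB = {"token", "ization", "s"}

def subword_tokens(word: str, vocab: set[str]) -> list[str]:
    """Greedy longest-match split of one word into vocabulary pieces."""
    tokens, start = [], 0
    while start < len(word):
        end = len(word)
        # Shrink the candidate piece until it is in the vocabulary.
        while end > start and word[start:end] not in vocab:
            end -= 1
        if end == start:
            # Nothing matched: fall back to a single character.
            end = start + 1
        tokens.append(word[start:end])
        start = end
    return tokens

if __name__ == "__main__":
    print(word_tokens("Models read tokens."))
    print(subword_tokens("tokenization", TOY_VOCAB))
    print(char_tokens("hi!"))
```

Word-level splitting keeps tokens readable but cannot handle unseen words, character-level splitting handles anything but produces long sequences, and subword splitting sits between the two.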

People trust legal advice generated by ChatGPT more than a lawyer – new study

Eike Schneiders, University of Southampton; Joshua Krook, University of Southampton, and Tina Seabrooke, University of Southampton

People who aren’t legal experts are more willing to rely on legal advice provided by ChatGPT than by real lawyers – at least, when they don’t know which of the two provided the advice. Continue reading

NotebookLM: Features and Use Cases

Jan. 01, 2024 /Mpelembe Media/ — NotebookLM is an experimental tool by Google that helps you understand and work with information from your notes and documents. It uses AI to summarize, answer questions, and generate new insights from your content.

How do you start a sentiment analysis project?

June 16, 2023 /Developers/ — Sentiment analysis is the process of determining the emotional tone of a piece of text. It is a subfield of natural language processing (NLP) that deals with identifying and extracting subjective information from text. Sentiment analysis is often used to understand customer sentiment, brand reputation, and social media trends.
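As a starting point, determining emotional tone can be sketched with a tiny lexicon-based scorer in Python: count positive and negative words and compare the totals. The word lists `POSITIVE` and `NEGATIVE` are hypothetical and far too small for real use, and this is only one simple approach, not necessarily the method the article goes on to describe.

```python
import re

# Hypothetical, deliberately tiny sentiment lexicons.
POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "terrible", "hate", "poor", "sad"}

def sentiment(text: str) -> str:
    """Label text by the balance of positive and negative lexicon words."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

if __name__ == "__main__":
    for sample in ("I love this great phone",
                   "Terrible service, I hate it",
                   "The box arrived on Tuesday"):
        print(sample, "->", sentiment(sample))
```

A scorer like this ignores negation and context ("not good" still counts as positive), which is one reason practical projects usually move on to trained models.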

There are two main types of sentiment analysis: Continue reading