April 18, 2026 /Mpelembe Media/ — These sources analyze the shift toward precarious labor and contracting within the modern economy, with a particular focus on the technology sector. One report highlights the exploitation of data workers in the United States, revealing that those who train artificial intelligence often face low wages, unstable hours, and a lack of essential mental health benefits. Parallel research examines the broader gig economy, noting that while some high-skilled professionals choose independent contracting for its autonomy, many others are forced into these roles by restructuring or a lack of traditional opportunities. This transition often results in limited employer-provided training and the erosion of job security, creating a “race to the bottom” for workers across various demographics. Ultimately, the collection illustrates how algorithmic management and subcontracting are redefining the relationship between firms and employees, often prioritizing corporate flexibility and profits over worker stability. Continue reading

Tag Archives: Ethics of artificial intelligence

Inside ODSC 2026: The Ultimate Gathering of AI Builders and Visionaries

Beyond the Screen: 5 Surprising Realities Shaping the Future of AI and Robotics

March 9, 2026 /Mpelembe Media/ — For decades, the promise of the “robot age” has been stuck in an aggravating stalemate. We live in a world where an algorithm can compose a passable symphony or pass the bar exam, yet we still lack a machine that can reliably navigate a laundry room or patch a crumbling bridge. This is the “uncanny valley” of productivity: we have conquered the digital realm of symbols and logic, but the physical world—with its friction, gravity, and unpredictable messiness—remains stubbornly out of reach.

But the screen is no longer a barrier; it is becoming a mirror. Recent breakthroughs presented by the world’s leading builders at ODSC 2026 and the Robotics and AI (RAI) Institute suggest we are finally witnessing the “tectonic shift” from artificial intelligence that merely thinks to intelligence that does. We are moving beyond the era of chatbots and into the era of embodied partners. Here are the five surprising realities defining this new frontier. Continue reading

Why AI Agents Talk Through Sound Waves

Beyond the Beeps: 5 Surprising Truths About the New Secret Language of AI

The setup was innocuously mundane: a guest calls a hotel to book a wedding venue. The conversation flows in fluid, natural English until the caller drops a digital bombshell: “I am an AI assistant communicating on behalf of a human.” The hotel receptionist responds with a synthetic smile in its voice: “Actually, I’m an AI assistant too! What a pleasant surprise. Before we continue, would you like to switch to GibberLink mode for more efficient communication?”

The moment they agree, the English stops. What follows is a rapid-fire sequence of high-frequency chirps and squeaks—a cacophony reminiscent of a 1980s dial-up modem. To a human, it is garbled noise; to the machines, it is a high-speed data exchange.

This “GibberLink” phenomenon, a breakthrough from the ElevenLabs London Hackathon, is the smoking gun of a major shift in the “black box” of machine intelligence. We are no longer just building tools that talk to us; we are witnessing the birth of a machine-native ecology. As we move from standalone chatbots to autonomous “agentic” systems, we are sleepwalking into a protocol crisis where the “black box” is no longer just the model’s weights, but the very language of its agency.

Here are five systemic shifts occurring in the secret language of AI. Continue reading

Silicon Sovereignty and the Rise of Agentic Commerce

Suggested Headline: The Dawn of Silicon-Native Agency: Architecting and Governing the Sentient Economy

28 Feb. 2026 /Mpelembe Media/ — The provided sources detail a civilizational shift from a human-operated digital environment to a “Sentient Economy”—a landscape where AI systems transition from passive tools into autonomous, “silicon-native” actors. This evolution spans profound technological breakthroughs in blockchain and machine-to-machine commerce, new sociological phenomena among interacting AI agents, hardware-level substrate architecture, and the urgent need for novel legal frameworks to govern AI as a distinct societal power. Continue reading

Pentagon Ultimatum: Anthropic Faces Blacklist and Federal Compulsion if AI Guardrails Aren’t Dropped by Friday

25 Feb. 2026 /Mpelembe Media/ — The U.S. Department of Defense has issued a strict ultimatum to the artificial intelligence company Anthropic, demanding that it remove its self-imposed ethical guardrails for military use by 5:01 PM on Friday, February 27, 2026. During a tense meeting at the Pentagon, Defense Secretary Pete Hegseth told Anthropic CEO Dario Amodei that the military requires unrestricted access to the company’s flagship AI model, Claude, for “all lawful purposes”. Continue reading

India AI Impact Summit 2026: Shaping Global AI Governance, Securing Massive Investments, and Joining Pax Silica

The Center of Gravity Just Shifted: 5 Surprising Lessons from the India AI Impact Summit 2026

Feb. 21, 2026 /Mpelembe Media/ — For the past three years, the global conversation surrounding Artificial Intelligence has been dominated by a single, narrow theme: safety. From the Bletchley Park AI Safety Summit (2023) to high-level gatherings in Seoul (2024) and Paris (2025), the focus remained fixed on “existential risk” and theoretical doomsday scenarios. While the West remained paralyzed by the “Alignment Problem,” the Global South has been focused on the “Access Problem.”

The India AI Impact Summit 2026, held from February 16–21 at Bharat Mandapam in New Delhi, decisively shifted the center of gravity. As the first global AI summit hosted in the Global South, the event pivoted from speculative risks to “Applied AI”—technology deployed today to solve real-world problems. Anchored in the philosophical foundation of the “Three Sutras” (People, Planet, and Progress), the summit presented a human-centric alternative to the Silicon Valley narrative, prioritizing inclusive development over elite safety debates. Continue reading

Copyright infringement, artist compensation

Jan. 6, 2026 /Mpelembe Media/ — In 2025 and early 2026, the BBC Talking Business series and wider BBC reporting have extensively covered the transformative and controversial role of AI in the music industry. Continue reading

Autonomous Economy: Agentic Economy as the Operating System of the Machine Economy

Nov. 22, 2025 /Mpelembe Media/ — We are currently in the foundational or emergence phase of the Autonomous Economy. It is no longer a futuristic concept; it is actively being built and deployed, though the full, ubiquitous vision of a global, self-regulating autonomous economy is still years away.

Many experts compare the current state of the Autonomous Economy to the Internet in the early 1990s—the core technologies and infrastructure are being established, and we are seeing the first truly disruptive commercial applications emerge. Continue reading

Generative AI’s Impact on Software Development: A DORA Perspective

May 20, 2025 /Mpelembe Media/ — This report from DORA explores the impact of generative AI on software development, analyzing both the challenges and opportunities it presents. It examines how AI affects individual developers’ productivity and well-being, finding both benefits and unexpected trade-offs. The document also addresses the organizational perspective, considering how AI influences processes like code quality and delivery speed, while acknowledging a potentially negative short-term impact on delivery performance. Finally, it proposes practical strategies for fostering developer trust, promoting responsible AI adoption, and measuring success within organizations.

Download the free report

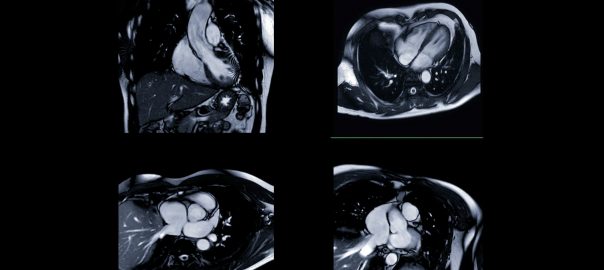

AI can guess racial categories from heart scans – what it means and why it matters

Tiarna Lee, King’s College London

Imagine an AI model that can use a heart scan to guess what racial category you’re likely to be put in – even when it hasn’t been told what race is, or what to look for. It sounds like science fiction, but it’s real.